Accelerator-CPU Integration for Heterogeneous SoC Modeling

Explore the integration of accelerators and CPUs in heterogeneous SoC modeling, discussing concepts like DMA, IP integration, and data sharing. Learn about typical DMA flow, cost considerations, co-design versus isolated design, and simulation tools like gem5-Aladdin for system-level studies.

Presentation Transcript

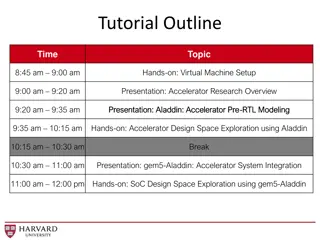

Integration for Heterogeneous SoC Modeling. Yakun Sophia Shao, Sam Xi, Gu-Yeon Wei, David Brooks. Harvard University.

Today's Accelerator-CPU Integration: DMA provides a simple interface to accelerators. It is easy to integrate lots of IP, but hard to program and share data. [Diagram labels: Core, L1 $, L2 $, Acc #1 through Acc #n, SPAD (scratchpad), On-Chip System Bus, DMA, DRAM.]

Typical DMA Flow: (1) Flush and invalidate input data from CPU caches. (2) Invalidate the region of memory that will receive accelerator output. (3) Program a buffer descriptor describing the transfer (start, length, source, destination); when the data is large, program multiple descriptors. (4) Initiate the accelerator. (5) Initiate the data transfer. (6) Wait for the accelerator to complete.
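The descriptor step can be sketched in C. This is a minimal illustration, not the layout used by any particular DMA engine or by gem5: the `dma_desc_t` struct, the `build_descriptors` function, and the per-descriptor size limit `max_len` are all hypothetical names introduced here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical descriptor layout for illustration; real DMA engines differ. */
typedef struct {
    uintptr_t src;  /* source address of this chunk */
    uintptr_t dst;  /* destination address of this chunk */
    size_t    len;  /* number of bytes to move in this chunk */
} dma_desc_t;

/*
 * Split one transfer of `len` bytes into descriptors of at most `max_len`
 * bytes each. Returns the number of descriptors written to `out`, or 0 if
 * `out` (capacity `out_cap`) is too small or there is nothing to transfer.
 */
size_t build_descriptors(uintptr_t src, uintptr_t dst, size_t len,
                         size_t max_len, dma_desc_t *out, size_t out_cap)
{
    assert(max_len > 0 && out != NULL);

    size_t needed = (len + max_len - 1) / max_len;  /* ceiling division */
    if (needed > out_cap)
        return 0;

    for (size_t i = 0; i < needed; i++) {
        size_t offset = i * max_len;
        size_t remaining = len - offset;
        out[i].src = src + offset;
        out[i].dst = dst + offset;
        out[i].len = remaining < max_len ? remaining : max_len;
    }
    return needed;
}
```

With a 4096-byte limit, a 10000-byte transfer yields three descriptors of 4096, 4096, and 1808 bytes.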

DMA can be very expensive. [Figure: 16-way parallel md-knn accelerator, with the callout "Only 20% of total time!"]

Co-Design vs. Isolated Design: no need to build such an aggressively parallel design!

Features: End-to-end simulation of accelerated workloads. Models hardware-managed caches and DMA + scratchpad memory systems. Supports multiple accelerators. Enables system-level studies of accelerator-centric platforms. Xenon: a powerful design sweep system. Highly configurable and extensible.

DMA Engine: Extends the existing DMA engine in gem5 to accelerators. Special dmaLoad and dmaStore functions are inserted into the accelerated kernel; the trace captures them and gem5-Aladdin handles them. Data is sent back and forth as required. An analytical model accounts for cache flush and invalidation latency.

DMA Engine

    /* Code representing the accelerator */
    void fft1D_512(TYPE work_x[512], TYPE work_y[512]) {
        int tid, hi, lo, stride;
        /* more setup */

        /* Bring both input arrays into the accelerator's local memory. */
        dmaLoad(&work_x[0], 0, 512 * sizeof(TYPE));
        dmaLoad(&work_y[0], 0, 512 * sizeof(TYPE));

        /* Run FFT here ... */

        /* Send both result arrays back to main memory. */
        dmaStore(&work_x[0], 0, 512 * sizeof(TYPE));
        dmaStore(&work_y[0], 0, 512 * sizeof(TYPE));
    }
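The load, compute, store shape of the kernel above can be tried natively with stand-in functions. The sketch below is an assumption-laden illustration: `sketch_dma_load` and `sketch_dma_store` are hypothetical helpers that simply copy bytes and do not share the signature or the timing behavior of gem5-Aladdin's dmaLoad and dmaStore, and `scale_kernel` is a toy kernel invented for this example.

```c
#include <stddef.h>
#include <string.h>

#define SKETCH_N 8
typedef int TYPE;

/* Hypothetical stand-ins for dmaLoad/dmaStore: plain copies, no timing model. */
static void sketch_dma_load(TYPE *spad, const TYPE *host, size_t bytes)
{
    memcpy(spad, host, bytes);
}

static void sketch_dma_store(TYPE *host, const TYPE *spad, size_t bytes)
{
    memcpy(host, spad, bytes);
}

/* Toy kernel: load the input into a local scratchpad, double it, store it back. */
void scale_kernel(const TYPE *host_in, TYPE *host_out)
{
    TYPE spad_in[SKETCH_N];
    TYPE spad_out[SKETCH_N];

    sketch_dma_load(spad_in, host_in, sizeof(spad_in));

    for (size_t i = 0; i < SKETCH_N; i++)
        spad_out[i] = 2 * spad_in[i];

    sketch_dma_store(host_out, spad_out, sizeof(spad_out));
}
```

The kernel body only ever touches the local scratchpad arrays, which is the property that lets a simulator account for the data movement separately from the computation.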

Caches and Virtual Memory: gaining traction on multiple platforms, such as the Intel QuickAssist QPI-Based FPGA Accelerator Platform (QAP) and IBM POWER8's Coherent Accelerator Processor Interface (CAPI). System vendors provide a Host Service Layer with virtual memory and cache coherence support. The Host Service Layer communicates with CPUs through an agent. [Diagram labels: Processors, FPGA, QPI/PCIe, Core, L1 $, L2 $, Accelerator, Acc Agent, Host Service Layer.]

Caches and Virtual Memory: Accelerator caches are connected directly to the system bus. Multi-level cache hierarchies are supported. The memory system can be hybrid, using both caches and scratchpads. Coherence uses the MOESI protocol. A special Aladdin TLB model maps the trace address space to the simulated address space.

Two ways to run gem5-Aladdin. Standalone: Aladdin + gem5 memory system models, with no CPUs in the system; easily test accelerator and memory system designs. With-CPU: write a user program to invoke one or more accelerators and evaluate end-to-end workload performance.

Validation: Implemented accelerators in Vivado HLS. Designed the complete system in Vivado Design Suite 2015.1.

CASE STUDY: REDUCING DMA OVERHEADS

DMA Optimization Results: overlap of flush and data transfer.

DMA Optimization Results: overlap of data transfer and compute.

DMA Optimization Results: md-knn is able to completely overlap computation with communication!
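The effect of overlapping flush, transfer, and compute can be illustrated with a toy three-stage pipeline model. This is a sketch under stated assumptions, not the analytical model used in gem5-Aladdin: the data is assumed to be split into equal chunks, each stage is assumed to handle one chunk at a time, and `model_overlap` and `dma_times_t` are names invented here.

```c
typedef struct {
    unsigned long serial;     /* every chunk finishes all stages before the next starts */
    unsigned long pipelined;  /* stages of different chunks overlap */
} dma_times_t;

static unsigned long max_ul(unsigned long a, unsigned long b)
{
    return a > b ? a : b;
}

/*
 * Toy model: each chunk goes through flush -> transfer -> compute.
 * `flush`, `xfer`, and `compute` are per-chunk latencies in cycles.
 */
dma_times_t model_overlap(unsigned chunks, unsigned long flush,
                          unsigned long xfer, unsigned long compute)
{
    dma_times_t t = {0, 0};
    unsigned long flush_done = 0, xfer_done = 0, compute_done = 0;

    for (unsigned i = 0; i < chunks; i++) {
        t.serial += flush + xfer + compute;

        /* A stage starts once its input is ready and the stage itself is free. */
        flush_done += flush;
        xfer_done = max_ul(flush_done, xfer_done) + xfer;
        compute_done = max_ul(xfer_done, compute_done) + compute;
    }
    t.pipelined = compute_done;
    return t;
}
```

For 10 chunks with flush = 1, transfer = 1, and compute = 5 cycles, the serial time is 70 cycles while the pipelined time is 52: after the first chunk arrives, communication is entirely hidden behind computation, which is the compute-bound situation the md-knn result describes.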

CPU-Accelerator Cosimulation: The CPU can invoke an attached accelerator through the ioctl system call. Status is communicated through shared memory. The CPU can spin-wait for the accelerator or do something else in the meantime (e.g. start another accelerator).

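Code example

    /* Code running on the CPU. */
    void run_benchmark(TYPE work_x[512], TYPE work_y[512]) {
        /* Establish a mapping from simulated to trace
         * address space. The length is written out explicitly because
         * an array parameter decays to a pointer, so sizeof(work_x)
         * would give the pointer size, not the array size. */
        mapArrayToAccelerator(MACHSUITE_FFT_TRANSPOSE, "work_x",
                              work_x, 512 * sizeof(TYPE));
    }

Arguments: MACHSUITE_FFT_TRANSPOSE is the ioctl request code. The string "work_x" associates this array name with the addresses of memory accesses in the trace. The last two arguments give the starting address and length of one memory region that the accelerator can access.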

Code example

    /* Code running on the CPU. */
    void run_benchmark(TYPE work_x[512], TYPE work_y[512]) {
        /* Establish a mapping from simulated to trace
         * address space. The lengths are written out explicitly because
         * array parameters decay to pointers, so sizeof(work_x) would
         * give the pointer size, not the array size. */
        mapArrayToAccelerator(MACHSUITE_FFT_TRANSPOSE, "work_x",
                              work_x, 512 * sizeof(TYPE));
        mapArrayToAccelerator(MACHSUITE_FFT_TRANSPOSE, "work_y",
                              work_y, 512 * sizeof(TYPE));

        // Start the accelerator and spin until it finishes.
        invokeAcceleratorAndBlock(MACHSUITE_FFT_TRANSPOSE);
    }
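The idea behind the array mapping can be sketched as a small lookup table: each registered array name is tied to a region of the simulated address space, and a trace access (array name plus byte offset) is translated into a simulated address. This is a simplified illustration, not Aladdin's TLB model; `mapping_table_t`, `map_array`, and `translate` are hypothetical names, and the fixed table size is an arbitrary choice.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define MAX_MAPPINGS 8

typedef struct {
    const char *name;      /* array name as it appears in the trace */
    uintptr_t   sim_base;  /* starting address in the simulated address space */
    size_t      size;      /* length of the region in bytes */
} array_mapping_t;

typedef struct {
    array_mapping_t entries[MAX_MAPPINGS];
    size_t count;
} mapping_table_t;

/* Register one array. Returns 0 on success, -1 if the table is full. */
int map_array(mapping_table_t *t, const char *name,
              uintptr_t sim_base, size_t size)
{
    if (t->count == MAX_MAPPINGS)
        return -1;
    t->entries[t->count].name = name;
    t->entries[t->count].sim_base = sim_base;
    t->entries[t->count].size = size;
    t->count++;
    return 0;
}

/*
 * Translate an access at `offset` bytes into array `name` to a simulated
 * address. Returns 0 and writes `*out` on success, or -1 if the array is
 * unknown or the offset falls outside the mapped region.
 */
int translate(const mapping_table_t *t, const char *name,
              size_t offset, uintptr_t *out)
{
    for (size_t i = 0; i < t->count; i++) {
        if (strcmp(t->entries[i].name, name) == 0) {
            if (offset >= t->entries[i].size)
                return -1;
            *out = t->entries[i].sim_base + offset;
            return 0;
        }
    }
    return -1;
}
```

Rejecting out-of-range offsets mirrors the point that each mapping describes one bounded memory region the accelerator is allowed to access.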

One accelerator, multiple calls: Call an accelerated function in a loop with different data each time. [Diagram: iterations i=0, i=1, i=2, each with CPU code and an ACCEL invocation.]

One accelerator, multiple calls: Build the trace as usual. The trace will contain all iterations of this loop.

One accelerator, multiple calls: Aladdin identifies call and ret instructions and marks them as the boundaries of an invocation.

One accelerator, multiple calls: Aladdin reads only the part of the trace that belongs to the current invocation, then continues as usual.

One accelerator, multiple calls: On the next iteration, Aladdin resumes reading the trace at the last position.
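The call/ret boundary scheme can be sketched with a reader that remembers where the previous invocation stopped. This is an illustration only: the real trace is a compressed instruction trace, whereas here it is reduced to an array of a hypothetical `trace_op_t` enum, nested calls are assumed not to occur, and `next_invocation` is a name invented for this example.

```c
#include <stddef.h>

typedef enum { OP_CALL, OP_RET, OP_OTHER } trace_op_t;

typedef struct {
    const trace_op_t *ops;  /* the whole trace, all loop iterations included */
    size_t len;             /* number of entries in the trace */
    size_t pos;             /* where the previous invocation stopped */
} trace_reader_t;

/*
 * Find the next call..ret region starting at r->pos. On success, store the
 * indices of the call and the ret, advance r->pos past the ret, and return 1.
 * Return 0 when no complete invocation remains.
 */
int next_invocation(trace_reader_t *r, size_t *first, size_t *last)
{
    size_t i = r->pos;

    while (i < r->len && r->ops[i] != OP_CALL)
        i++;
    if (i == r->len)
        return 0;
    size_t start = i;

    while (i < r->len && r->ops[i] != OP_RET)
        i++;
    if (i == r->len)
        return 0;

    *first = start;
    *last = i;
    r->pos = i + 1;  /* the next invocation resumes from here */
    return 1;
}
```

Each call to `next_invocation` corresponds to one loop iteration on the CPU side: only the slice between one call and its ret is consumed, and the saved position makes the following invocation pick up where this one ended.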

Multiple accelerators: Build the trace as usual. Then choose one of two options. (a) Divide it into separate traces, one for each kernel. In the user code, call invokeAccelerator() with a different request code for each accelerator. This makes it easier to distinguish the output of different accelerators. (b) Leave it as a single trace. invokeAccelerator() then has the same request code each time, even though a different workload is modeled.

How can I use gem5-Aladdin? Investigate optimizations to the DMA flow. Study cache-based accelerators. Study the impact of system-level effects on accelerator design. Explore multi-accelerator systems and near-data processing. All of these will require design sweeps!

Xenon: Design Sweep System. Why Xenon? It is a small declarative command language for generating design sweep configurations. Implemented as a Python embedded DSL. Highly extensible. Not gem5-Aladdin specific. Not limited to sweeping parameters on benchmarks.

1,000 ft view of Xenon: Xenon operates on Python objects and attributes. (1) Define a data model. (2) Instantiate the data model. (3) Execute Xenon commands over the data.

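Xenon: Data Model. [Diagram: data model for md-knn. Benchmark attributes: cycle_time, pipelining. Function md_kernel with loops loop_i and loop_j, attribute: unrolling. Arrays force_x, force_y, force_z, attributes: partition_type, partition_factor, memory_type.]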

Xenon: Commands

    set unrolling 4
    set partition_type cyclic
    set unrolling for md_knn.* 8
    set partition_type for md_knn.force_x block
    sweep cycle_time from 1 to 5
    sweep partition_factor from 1 to 8 expstep 2
    set partition_factor for md_knn.force_x 8
    generate configs
    generate trace
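To get a feel for how many configurations the two sweep commands produce, the value lists can be expanded by hand. The sketch below is plain C arithmetic, not Xenon itself (which is a Python embedded DSL). It assumes that both endpoints are inclusive and that `expstep 2` multiplies the value by 2 at each step; the slides do not spell out these semantics, and `linear_sweep` and `exp_sweep` are hypothetical helpers.

```c
#include <stddef.h>

/* Values from `from` to `to` inclusive, adding `step` each time. */
size_t linear_sweep(int from, int to, int step, int *out, size_t cap)
{
    size_t n = 0;
    if (step <= 0)
        return 0;
    for (int v = from; v <= to && n < cap; v += step)
        out[n++] = v;
    return n;
}

/* Values from `from` to `to` inclusive, multiplying by `factor` each time
 * (the assumed meaning of "expstep"). */
size_t exp_sweep(int from, int to, int factor, int *out, size_t cap)
{
    size_t n = 0;
    if (from <= 0 || factor <= 1)
        return 0;
    for (int v = from; v <= to && n < cap; v *= factor)
        out[n++] = v;
    return n;
}
```

Under these assumptions, `sweep cycle_time from 1 to 5` gives 5 values and `sweep partition_factor from 1 to 8 expstep 2` gives 4 values (1, 2, 4, 8), so the cross product of the two sweeps is 20 configurations.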

Xenon: Generation Procedure. (1) Read the sweep configuration file. (2) Execute the sweep commands. (3) Generate all configurations. (4) Export the configurations to JSON. (5) Backend: read the JSON and rewrite it into the desired format. (6) Backend: generate any additional outputs.

Xenon: Execute

    "Benchmark(\"md-knn\")": {
      "Array(\"NL\")": {
        "memory_type": "cache",
        "name": "NL",
        "partition_factor": 1,
        "partition_type": "cyclic",
        "size": 4096,
        "type": "Array",
        "word_length": 8
      },
      "Array(\"force_x\")": {
        "memory_type": "cache",
        "name": "force_x",
        "partition_factor": 1,
        "partition_type": "cyclic",
        "size": 256,
        "type": "Array",
        "word_length": 8
      },
      "Array(\"force_y\")": {
        "memory_type": "cache",
        "name": "force_y",
        "partition_factor": 1,
        "partition_type": "cyclic",
        "size": 256,
        "type": "Array",
        "word_length": 8
      }
      ...

Every configuration is written to a JSON file. A backend is then invoked to load this JSON object and write application-specific config files.

gem5-Aladdin: System effects have a significant impact on accelerator performance and design. gem5-Aladdin enables the study of end-to-end accelerated workloads, including data movement, cache coherency, and shared resource contention. Download gem5-Aladdin at: http://vlsiarch.eecs.harvard.edu/gem5-aladdin

DEMOS

Demo: DMA. Exercise: change the system bus width and see the effect on accelerator performance. Open up your VM. Go to ~gem5-aladdin/sweeps/tutorial/dma/stencil-stencil2d/0 and examine these files: stencil-stencil2d.cfg, ../inputs/dynamic_trace.gz, gem5.cfg, run.sh.

Demo: DMA. (1) Run the accelerator with DMA simulation. (2) Change the system bus width to 32 bits by setting xbar_width=4 in run.sh. (3) Run again and compare the results.
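Before running the exercise, a back-of-the-envelope estimate shows why bus width matters. The sketch below counts how many bus beats a transfer needs, assuming one beat moves at most one bus width of data and ignoring arbitration, burst limits, and contention; it is not how gem5 computes latency, and `dma_beats` is a hypothetical helper. The width is given in bytes, matching xbar_width=4 for a 32-bit bus.

```c
#include <stddef.h>

/*
 * Number of bus beats needed to move `bytes` over a bus that carries
 * `bus_width_bytes` per beat (ceiling division). Returns 0 for a zero width.
 */
size_t dma_beats(size_t bytes, size_t bus_width_bytes)
{
    if (bus_width_bytes == 0)
        return 0;
    return (bytes + bus_width_bytes - 1) / bus_width_bytes;
}
```

In this simplified view, halving the width from 8 bytes to 4 bytes doubles the beats for a 4096-byte transfer from 512 to 1024; the simulation results show how much of that reaches end-to-end accelerator performance.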