Data-Parallel Programming using SaC: The Multicore Challenge and Beyond

This lecture introduces data-parallel programming in SaC as a response to the multicore challenge of performance, sustainability, and affordability. It contrasts today's and tomorrow's programming scenarios, presents the high-portability vision, explains what data-parallelism is, and argues for formulating algorithms in space rather than time, using products and Fibonacci numbers as examples. The target technologies shown are OpenCL, VHDL, TC, and MPI/OpenMP.

Presentation Transcript

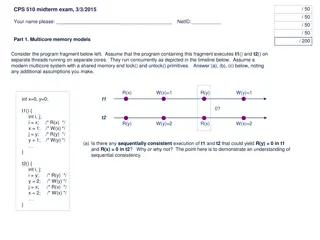

Data-Parallel Programming using SaC, Lecture 1
F21DP Distributed and Parallel Technology
Sven-Bodo Scholz

The Multicore Challenge
performance? sustainability? affordability?
[Figure: 16 boxes labelled SVP]

Typical Scenario
[Figure: 16 boxes labelled SVP, with the labels algorithm, VHDL, TC, OpenCL, and MPI/OpenMP]

Tomorrow's Scenario
[Figure: 16 boxes labelled SVP, with the labels algorithm, OpenCL, VHDL, TC, and MPI/OpenMP]

The High-Portability Vision
[Figure: 16 boxes labelled SVP, with the labels algorithm, compiler, OpenCL, VHDL, TC, and MPI/OpenMP]

What is Data-Parallelism?
Concurrent operations on a single data structure.
[Figure: three actors operating on one data structure]

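Data-Parallelism, More Concretely
Formulate algorithms in space rather than time!
In time: prod = 1; for( i=1; i<=10; i++) { prod = prod*i; }
In space: prod = prod( iota( 10)+1)
[Figure: the loop passes through 1, 2, 6, ..., 3628800 one step at a time; the array version combines 1, 2, ..., 10 into 3628800]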
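
The contrast between the two formulations can be sketched in Python rather than SaC. This is only an illustration: `math.prod` and a list comprehension stand in for SaC's `prod` and `iota`, and the function names `prod_in_time` and `prod_in_space` are made up for this sketch.

```python
import math


def prod_in_time(n):
    """Loop formulation: the order of the multiplications is fixed by the loop."""
    result = 1
    for i in range(1, n + 1):
        result = result * i
    return result


def prod_in_space(n):
    """Array formulation: build the values 1..n, then reduce them as a whole."""
    values = [i + 1 for i in range(n)]  # plays the role of iota(n) + 1
    return math.prod(values)


print(prod_in_time(10), prod_in_space(10))
```

Both functions return the same number; the difference is that the second one says nothing about the order in which the multiplications happen.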

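Why is Space Better than Time?
From the single specification prod( iota( n)), the compiler can generate sequential code, multi-threaded code, or micro-threaded code.
[Figure: three evaluation orders for the product of 1 to 10, each ending in 3628800; the intermediate values shown include the partial products 6, 120, and 5040]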
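
Why one specification admits several schedules can be sketched in Python: multiplication is associative, so the same values can be reduced left to right, in independent chunks, or as a tree. The function names and the chunk boundaries `[0, 3, 6, 10]` are assumptions chosen for illustration (the boundaries reproduce the partial products 6, 120, and 5040); this is not what the SaC compiler actually emits.

```python
import math
from functools import reduce
from operator import mul


def sequential(values):
    """Left-to-right reduction, as a single thread would do it."""
    return reduce(mul, values, 1)


def chunked(values, bounds):
    """Multiply each chunk independently, then combine the partial products."""
    partials = [math.prod(values[a:b]) for a, b in zip(bounds, bounds[1:])]
    return partials, math.prod(partials)


def pairwise(values):
    """Tree reduction: multiply neighbours until one value remains."""
    level = list(values)
    while len(level) > 1:
        level = [math.prod(level[i:i + 2]) for i in range(0, len(level), 2)]
    return level[0] if level else 1


values = list(range(1, 11))
print(sequential(values))
print(chunked(values, [0, 3, 6, 10]))
print(pairwise(values))
```

Each chunk in `chunked` and each pair in `pairwise` could run on a different core, because no product depends on a neighbouring one.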

Another Example: Fibonacci Numbers
if( n<=1) { return n; } else { return fib( n-1) + fib( n-2); }
[Figure: call tree of fib(4) with the nodes fib(3), fib(2), fib(1), fib(0), fib(1), fib(2), fib(0), fib(1); fib(2) is evaluated twice]
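
The repeated work in the recursive definition can be made visible with a small Python sketch. The `calls` counter is an addition for illustration only and is not part of the lecture's code.

```python
from collections import Counter

calls = Counter()  # how often fib is invoked for each argument


def fib(n):
    """Naive recursive Fibonacci, instrumented to count invocations."""
    calls[n] += 1
    if n <= 1:
        return n
    return fib(n - 1) + fib(n - 2)


result = fib(4)
print(result, dict(calls))
```

For `fib(4)` the function is entered nine times in total, and `fib(2)` is computed twice.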

Fibonacci Numbers now linearised!
if( n== 0) return fst; else return fib( snd, fst+snd, n- 1);
[Figure: the pair (fst, snd) steps through (0, 1), (1, 1), (1, 2), (2, 3), that is from (fib(0), fib(1)) to (fib(3), fib(4))]
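
A minimal Python sketch of the linearised version follows. The three-argument `fib` mirrors the expression on the slide; the helper `trace`, which records the (fst, snd) pairs, is hypothetical and added only to show the intermediate states.

```python
def fib(fst, snd, n):
    """Linear recursion: carry the last two Fibonacci numbers along."""
    if n == 0:
        return fst
    return fib(snd, fst + snd, n - 1)


def trace(n):
    """Record the (fst, snd) pair at every step of the linear recursion."""
    fst, snd = 0, 1
    states = [(fst, snd)]
    for _ in range(n):
        fst, snd = snd, fst + snd
        states.append((fst, snd))
    return states


print(fib(0, 1, 4))
print(trace(3))
```

Each value is now computed exactly once, but every step depends on the previous one, so the computation is still inherently sequential.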

Fibonacci Numbers now data-parallel!
matprod( genarray( [n], [[1, 1], [1, 0]])) [0,0]
[Figure: three copies of [[1, 1], [1, 0]], that is [[fib(2), fib(1)], [fib(1), fib(0)]], are multiplied to [[2, 1], [1, 1]] and then [[3, 2], [2, 1]], that is [[fib(4), fib(3)], [fib(3), fib(2)]]]
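
The matrix formulation can be sketched in Python. The names `matmul2` and `matprod` below are written for this illustration and are not SaC library functions; the tree-shaped reduction is one possible schedule, chosen to show that neighbouring products are independent of each other. The product of k copies of [[1, 1], [1, 0]] is [[fib(k+1), fib(k)], [fib(k), fib(k-1)]], so three copies give fib(4) at position [0][0].

```python
M = ((1, 1), (1, 0))


def matmul2(a, b):
    """Product of two 2x2 matrices given as nested tuples."""
    return (
        (a[0][0] * b[0][0] + a[0][1] * b[1][0], a[0][0] * b[0][1] + a[0][1] * b[1][1]),
        (a[1][0] * b[0][0] + a[1][1] * b[1][0], a[1][0] * b[0][1] + a[1][1] * b[1][1]),
    )


def matprod(mats):
    """Tree reduction over a non-empty list of 2x2 matrices, preserving order."""
    level = list(mats)
    while len(level) > 1:
        nxt = [matmul2(level[i], level[i + 1]) for i in range(0, len(level) - 1, 2)]
        if len(level) % 2:
            nxt.append(level[-1])  # odd element out is carried to the next level
        level = nxt
    return level[0]


print(matprod([M] * 2))
print(matprod([M] * 3))
print(matprod([M] * 10)[0][1])
```

Because matrix multiplication is associative, all products on one level of the tree can be computed concurrently.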

Everything is an Array
Think Arrays!
Vectors are arrays. Matrices are arrays. Tensors are arrays. ... are arrays.
Even scalars are arrays.
Any operation maps arrays to arrays.
Even iteration spaces are arrays.

Index-Free Combinator-Style Computations
L2 norm: sqrt( sum( square( A)))
Convolution step: W1 * shift(-1, A) + W2 * A + W1 * shift( 1, A)
Convergence test: all( abs( A-B) < eps)
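
The three expressions can be approximated in Python on flat lists. This is a sketch under stated assumptions: the `shift` helper here moves elements by `offset` positions and fills vacated positions with 0, which is an assumption about the intended boundary behaviour, and all function names are written for this illustration rather than taken from a SaC library.

```python
import math


def shift(offset, a, fill=0.0):
    """Move elements by `offset` positions; vacated slots get `fill` (assumed)."""
    n = len(a)
    return [a[i - offset] if 0 <= i - offset < n else fill for i in range(n)]


def l2norm(a):
    """sqrt( sum( square( A))) for a flat list."""
    return math.sqrt(sum(x * x for x in a))


def conv_step(w1, w2, a):
    """W1 * shift(-1, A) + W2 * A + W1 * shift( 1, A), element by element."""
    return [w1 * l + w2 * c + w1 * r for l, c, r in zip(shift(-1, a), a, shift(1, a))]


def converged(a, b, eps):
    """all( abs( A-B) < eps)."""
    return all(abs(x - y) < eps for x, y in zip(a, b))


print(l2norm([3.0, 4.0]))
print(conv_step(1.0, 2.0, [1.0, 2.0, 3.0]))
print(converged([1.0, 2.0], [1.0, 2.0005], 1e-3))
```

Only `shift` touches indices; the three computations built on top of it never mention one.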

Shape-Invariant Programming
l2norm( [1,2,3,4] )
= sqrt( sum( sqr( [1,2,3,4])))
= sqrt( sum( [1,4,9,16]))
= sqrt( 30)
= 5.4772

Shape-Invariant Programming
l2norm( [[1,2],[3,4]] )
= sqrt( sum( sqr( [[1,2],[3,4]])))
= sqrt( sum( [[1,4],[9,16]]))
= sqrt( [5,25])
= [2.2361, 5]
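
Shape invariance, one definition that works for a vector and for a matrix, can be imitated in Python with nested lists. This is a rough sketch, not SaC: the helpers `map_elems` and `sum_innermost` are hypothetical, and summing along the innermost axis is an assumption taken from the evaluation steps shown for the matrix case.

```python
import math


def is_scalar(a):
    return not isinstance(a, list)


def map_elems(f, a):
    """Apply f to every scalar, whatever the nesting depth."""
    return f(a) if is_scalar(a) else [map_elems(f, x) for x in a]


def sum_innermost(a):
    """Sum along the innermost axis, reducing the nesting depth by one."""
    if all(is_scalar(x) for x in a):
        return sum(a)
    return [sum_innermost(x) for x in a]


def l2norm(a):
    """sqrt( sum( sqr( a))) for nested lists of any depth of at least one."""
    return map_elems(math.sqrt, sum_innermost(map_elems(lambda x: x * x, a)))


print(l2norm([1, 2, 3, 4]))
print(l2norm([[1, 2], [3, 4]]))
```

The body of `l2norm` never inspects the shape of its argument; only the generic helpers do.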

Computation of π

    double f( double x)
    {
      return 4.0 / (1.0+x*x);
    }

    int main()
    {
      num_steps = 10000;
      step_size = 1.0 / tod( num_steps);
      x = (0.5 + tod( iota( num_steps))) * step_size;
      y = { iv-> f( x[iv])};
      pi = sum( step_size * y);
      printf( " ...and pi is: %f\n", pi);
      return(0);
    }
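
For readers without a SaC compiler at hand, the same midpoint-rule integration of 4/(1+x²) over [0, 1] can be sketched in Python. The function name `approximate_pi` is made up for this illustration, and list comprehensions stand in for the whole-array operations.

```python
import math


def f(x):
    return 4.0 / (1.0 + x * x)


def approximate_pi(num_steps=10000):
    """Midpoint rule: sample f at the centre of each of num_steps intervals."""
    step_size = 1.0 / num_steps
    xs = [(0.5 + i) * step_size for i in range(num_steps)]  # all sample points at once
    ys = [f(x) for x in xs]                                 # f applied to every point
    return sum(step_size * y for y in ys)


estimate = approximate_pi()
print(f" ...and pi is: {estimate:f}")
print(abs(estimate - math.pi))
```

Every element of `xs` and `ys` can be computed independently; only the final sum combines them.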

Programming in a Data-Parallel Style: Consequences
- much less error-prone indexing!
- combinator style
- increased reuse
- better maintenance
- easier to optimise
- huge exposure of concurrency!