Energy-Aware Wireless Scheduling with Near-Optimal Backlog and Convergence Time Tradeoffs

This study focuses on energy-aware wireless scheduling strategies with near optimal backlog and convergence time tradeoffs. It explores the challenges and solutions involved in controlling random arrivals and power use in wireless communication systems. Stochastic optimization problems, prior algorithms, and analysis techniques are discussed to achieve efficient power allocation and system performance.

Download Presentation

Please find below an Image/Link to download the presentation.

The content on the website is provided AS IS for your information and personal use only. It may not be sold, licensed, or shared on other websites without obtaining consent from the author.If you encounter any issues during the download, it is possible that the publisher has removed the file from their server.

You are allowed to download the files provided on this website for personal or commercial use, subject to the condition that they are used lawfully. All files are the property of their respective owners.

The content on the website is provided AS IS for your information and personal use only. It may not be sold, licensed, or shared on other websites without obtaining consent from the author.

E N D

Presentation Transcript

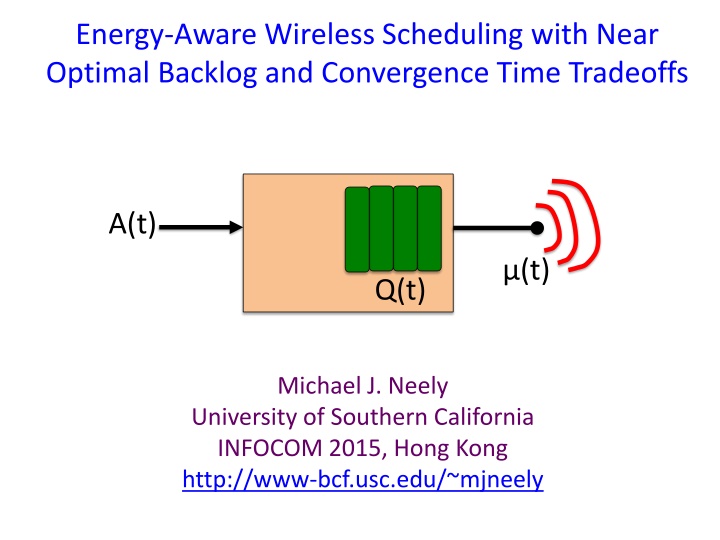

Energy-Aware Wireless Scheduling with Near Optimal Backlog and Convergence Time Tradeoffs A(t) (t) Q(t) Michael J. Neely University of Southern California INFOCOM 2015, Hong Kong http://www-bcf.usc.edu/~mjneely

A Single Wireless Link A(t) (t) Q(t) Q(t+1) = max[Q(t) + A(t) (t), 0]

A Single Wireless Link A(t) (t) Q(t) Q(t+1) = max[Q(t) + A(t) (t), 0] Uncontrolled: A(t) = random arrivals,

A Single Wireless Link A(t) (t) Q(t) Q(t+1) = max[Q(t) + A(t) (t), 0] Uncontrolled: A(t) = random arrivals, Controlled: (t) = bits served [depends on power use & channel state]

Random Channel States (t) (t) t Observe (t) on slot t (t) in {0, 1, 2, , M} (t) ~ i.i.d. over slots ( k) = Pr[ (t) = k] Probabilities are unknown

Opportunistic Power Allocation p(t) = power decision on slot t [based on observation of (t)] Assume: p(t) in {0, 1} ( on or off ) (t) = p(t) (t) Time average expectations: t-1 p(t) = (1/t) E[ p( ) ] =0

Stochastic Optimization Problem Minimize : lim p(t) Subject to: lim (t) p(t) in {0, 1} for all slots t p* = ergodic optimal average power Define: Fix >0. -approximation on slot t if: p(t) p* + (t) - Challenge: Unknown probabilities!

Prior algorithms and analysis E[Q] T O(1/ ) O(1/ 2) Neely 03, 06 (DPP) Georgiadis et al. 06 Neely, Modiano, Li 05, 08: O(1/ 2) O(1/ ) O(log(1/ )) O(1/ 2) Neely 07: Huang et. al. 13 (DPP-LIFO): O(log2(1/ )) O(1/ 2) O(1/ 2) Li, Li, Eryilmaz 13, 15: O(1/ ) (additional sample path results)

Prior algorithms and analysis E[Q] T O(1/ ) O(1/ 2) Neely 03, 06 (DPP) Georgiadis et al. 06 Neely, Modiano, Li 05, 08: O(1/ 2) O(1/ ) O(log(1/ )) O(1/ 2) Neely 07: Huang et. al. 13 (DPP-LIFO): O(log2(1/ )) O(1/ 2) O(1/ 2) Li, Li, Eryilmaz 13, 15: O(1/ ) (additional sample path results) Huang et al. 14:O(1/ 2/3) O(1/ 1+2/3)

Main Results 1.Lower Bound: No algorithm can do better than O(1/ ) convergence time. 2.Upper Bound: Provide tighter analysis to show that Drift-Plus-Penalty (DPP) algorithm achieves: Convergence Time: T = O( log(1/ ) / ) Average queue size: E[Q] O( log(1/ ) )

Part 1: (1/) Lower Bound for all Algorithms Example system: (t) in {1, 2, 3} Pr[ (t) = 3], Pr[ (t) = 2], Pr[ (t) = 1] unknown. Proof methodology: Case 1: Pr[ transmit | (0) = 2 ] > . o Assume Pr[ (t) = 3] = Pr[ (t) = 2] = . o Optimally compensate for mistake on slot 0. Case 2: Pr[ transmit | (0) = 2 ] . o Assume different probabilities. o Optimally compensate for mistake on slot 0.

Case 1: Fix =1, > 0 1 Power E[p(t)] X h( ) curve 0 1 Rate E[ (t)] 0

Case 1: Fix =1, > 0 1 (E[ (0)], E[p(0)]) is in this region. Power E[p(t)] A X 0 1 Rate E[ (t)] 0

Case 1: Fix =1, > 0 1 (E[ (0)], E[p(0)]) is in this region. Power E[p(t)] A X 0 1 Rate E[ (t)] 0

Case 1: Fix =1, > 0 1 (E[ (0)], E[p(0)]) is in this region. Power E[p(t)] A X 0 1 Rate E[ (t)] 0

Case 1: Fix =1, > 0 1 (E[ (0)], E[p(0)]) is in this region. Power E[p(t)] A Optimal compensation Requires time (1/ ). X 0 1 Rate E[ (t)] 0

Part 2: Upper Bound Channel states 0 < 1 < 2< < M General h( ) curve (piecewise linear) Power E[p(t)] p* h( ) curve Rate E[ (t)]

Part 2: Upper Bound Channel states 0 < 1 < 2< < M General h( ) curve (piecewise linear) Power E[p(t)] Transmit iff (t) -1 Transmit iff (t) h( ) curve Rate E[ (t)]

Drift-Plus-Penalty Alg (DPP) (t) = Q(t+1)2 Q(t)2 Observe (t), choose p(t) to minimize: (t) + V p(t) Drift Weighted penalty

Drift-Plus-Penalty Alg (DPP) (t) = Q(t+1)2 Q(t)2 Observe (t), choose p(t) to minimize: (t) + V p(t) Drift Weighted penalty Algorithm becomes: P(t) = 1 if Q(t) (t) V P(t) = 0 else (t) Q(t)

Drift Analysis of DPP Positive drift Negative drift Q(t) 0 V/ k-1 V/ k+1 V/ k Transmit iff (t) Transmit iff (t) -1 0 < 1 < 2< < M

Useful Drift Lemma (with transients) Negative drift: - Z(t) 0 Lemma: E[erZ(t)] D + (erZ(0) D) t transient steady state Apply 1: Z(t) = Q(t) Apply 2: Z(t) = V/ k Q(t)

After transient time O(V) we get: Pr[ Red intervals ] = O(e-cV) Positive drift Negative drift Q(t) 0 V/ k-1 V/ k+1 V/ k Choose V = log(1/ ) Pr[ Red ] = O( )

After transient time O(V) we get: Pr[ Red intervals ] = O(e-cV) Positive drift Negative drift Q(t) 0 V/ k-1 V/ k+1 V/ k

Analytical Result p* But queue is stable, so E[ ] = + O( ). So we timeshare appropriately and: E[Q(t)] O( log(1/ ) ) T O( log(1/ ) / )

Non-ergodic simulation (adaptive to changes)

Conclusions Fundamental lower bound on convergence time o Unknown probabilities o Cramer-Rao like bound for controlled queues Tighter drift analysis for DPP algorithm: o -approximation to optimal power o Queue size O( log(1/ ) ) [optimal] o Convergence time O( log(1/ )/ ) [near optimal]