Equivalence Between Priority Queues and Sorting in External Memory

This talk by Zhewei Wei and Ke Yi studies the relationship between priority queues and sorting in external memory. A priority queue immediately yields a sorting algorithm. Conversely, by extending Thorup's RAM-model reduction, any external sorting algorithm can be used as a black box to build an external priority queue whose amortized cost per operation nearly matches the per-key cost of sorting.

Presentation Transcript

Equivalence Between Priority Queues and Sorting in External Memory. Zhewei Wei (Renmin University of China; MADALGO, Aarhus University), Ke Yi (The Hong Kong University of Science and Technology)

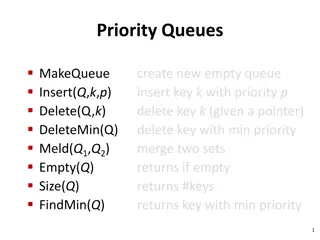

Priority Queue: Maintain a set of keys, supporting insertions, deletions, and findmin (deletemin). It is a fundamental data structure, used as a subroutine in greedy algorithms such as Dijkstra's single-source shortest path algorithm and Prim's minimum spanning tree algorithm.
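As an illustration of the greedy use case, here is a minimal Dijkstra sketch in Python using the standard-library heapq module as the priority queue. The function name and the adjacency-dict graph format are assumptions for the example. Because heapq has no decrease-key, outdated heap entries are simply skipped when they surface.

```python
import heapq

def dijkstra(graph, source):
    """graph: dict mapping node -> list of (neighbor, weight), weights >= 0.
    Returns a dict of shortest distances from source."""
    dist = {source: 0}
    heap = [(0, source)]
    while heap:
        d, u = heapq.heappop(heap)              # deletemin
        if d > dist.get(u, float("inf")):
            continue                            # stale entry, already improved
        for v, w in graph.get(u, []):
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v] = nd
                heapq.heappush(heap, (nd, v))   # insertion
    return dist
```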

Sorting to Priority Queue: A priority queue can sort. Given N unsorted keys, insert the keys into the priority queue, then perform N deletemin operations (find the minimum and delete it). If a priority queue supports insertion, deletion, and findmin in S(N) time, then the resulting sorting algorithm runs in O(N·S(N)) time.
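A minimal sketch of this reduction, with Python's heapq standing in for the priority queue (pq_sort is a hypothetical name): N insertions followed by N deletemins return the keys in sorted order.

```python
import heapq

def pq_sort(keys):
    """Sort by N insertions followed by N deletemin operations."""
    heap = []
    for k in keys:
        heapq.heappush(heap, k)                         # N insertions
    return [heapq.heappop(heap) for _ in range(len(heap))]  # N deletemins
```

With a binary heap S(N) = O(log N), so this is the familiar O(N log N) heapsort.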

Priority Queue to Sorting: Thorup [2007]: sorting can do priority queue! Suppose a sorting algorithm sorts N keys in N·S(N) time in the RAM model. Using the sorting algorithm as a black box, a priority queue supports all operations in O(S(N)) time. For example, $O(N\log\log N)$ sorting gives an $O(\log\log N)$ priority queue, and $O(N\sqrt{\log\log N})$ sorting gives an $O(\sqrt{\log\log N})$ priority queue.

The I/O Model [Aggarwal and Vitter 1988]: A CPU, a memory of size M, and a disk of unlimited size; data moves between memory and disk in blocks of size B. Complexity: the number of block transfers (I/Os). CPU computations and memory accesses are free.
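To make the cost measure concrete, here is a toy simulator (a hypothetical class, not from the slides): memory holds M // B blocks, touching a word whose block is not in memory costs one I/O, and accesses to resident blocks are free. LRU eviction and counting only block reads are simplifying assumptions of the sketch.

```python
from collections import OrderedDict

class IOCounter:
    """Toy I/O-model cost counter: M words of memory, blocks of B words.
    Only block reads are counted; eviction is LRU (an assumption)."""

    def __init__(self, M, B):
        self.B = B
        self.capacity = M // B       # number of blocks that fit in memory
        self.cache = OrderedDict()   # resident block ids, least recently used first
        self.ios = 0

    def access(self, address):
        block = address // self.B
        if block in self.cache:
            self.cache.move_to_end(block)       # in-memory access: free
        else:
            self.ios += 1                       # one block transfer
            self.cache[block] = None
            if len(self.cache) > self.capacity:
                self.cache.popitem(last=False)  # evict least recently used block
```

For example, scanning 100 consecutive words with B = 8 costs ceil(100/8) = 13 I/Os regardless of M.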

Cache-Oblivious Model: The same CPU/memory/disk setting with an unlimited-size disk, but the memory size and block size are unknown to the algorithm. An algorithm designed without knowledge of M and B should be optimal for all M and B.

Sorting in the I/O Model: Sorting bound: $\mathrm{Sort}(N) = \Theta\left(\frac{N}{B}\log_{M/B} N\right)$ I/Os, treating keys as atoms. Upper bound: external merge sort. Lower bound: holds in the comparison model or under the indivisibility assumption. Conjecture: the lower bound holds for B not too small, even without the indivisibility assumption.
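The upper bound comes from external merge sort. The sketch below (a hypothetical function, simulated in RAM) forms sorted runs of M keys, then repeatedly merges up to M/B − 1 runs at a time with heapq.merge, reserving one block for output (a common textbook choice, assumed here). It charges 2·ceil(N/B) I/Os per pass for reading and writing the data once, ignoring partial-block rounding per run, so the pass count is about 1 + log_{M/B}(N/M).

```python
import heapq
import math

def external_merge_sort(keys, M, B):
    """Simulated external merge sort; returns (sorted_keys, estimated_ios).
    Cost model (simplified): every pass reads and writes all N keys once."""
    fanout = max(2, M // B - 1)               # runs merged at once
    pass_cost = 2 * math.ceil(len(keys) / B)  # read + write every block
    # Pass 0: cut the input into memory-sized runs and sort each in memory.
    runs = [sorted(keys[i:i + M]) for i in range(0, len(keys), M)]
    ios = pass_cost if runs else 0
    # Merge passes: each pass reduces the number of runs by a factor of fanout.
    while len(runs) > 1:
        runs = [list(heapq.merge(*runs[i:i + fanout]))
                for i in range(0, len(runs), fanout)]
        ios += pass_cost
    return (runs[0] if runs else []), ios
```

With N = 1000, M = 100, B = 10 there are 10 initial runs and a fan-out of 9, so two merge passes follow the run-formation pass, for 3 · 200 = 600 I/Os.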

Priority Queue in External Memory (I/O model): buffer tree [Arge 1995], M/B-ary heaps [Fadel et al. 1999], array heaps [Brodal and Katajainen 1998], all with $O\left(\frac{1}{B}\log_{M/B} N\right)$ amortized cost per operation. These structures are tree-based and do not give any priority-queue-to-sorting reduction.
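Plugging this cost into the sorting-to-priority-queue argument shows that it is the natural target: N insertions and N deletemins add up to the sorting bound.

```latex
2N \cdot O\!\left(\frac{1}{B}\log_{M/B} N\right)
  = O\!\left(\frac{N}{B}\log_{M/B} N\right)
  = O\big(\mathrm{Sort}(N)\big)
```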

Priority Queue in External Memory (cache-oblivious): The cache-oblivious priority queue [Arge et al. 2002] moves keys around among log log N levels and achieves $O\left(\frac{1}{B}\log_{M/B} N\right)$ amortized I/Os under the tall-cache assumption $M > B^2$. Reduction: given an external sorting algorithm that sorts N keys in N·S(N)/B I/Os, there is an external priority queue that supports all operations in $O(S(N)\log\log N/B)$ amortized I/Os.

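Our Results: Suppose a sorting algorithm sorts N keys in N·S(N)/B I/Os in the I/O model. Using the sorting algorithm as a black box, a priority queue supports all operations in $O\left(\frac{1}{B}\sum_{i\ge 0} S\left(B\log^{(i)}(N/B)\right)\right)$ amortized I/Os, i.e., roughly $\frac{1}{B}\left(S(N) + S(B\log N) + S(B\log\log N) + \cdots\right)$. This is $O(S(N)/B)$ if $S(N) = \Omega(2^{\log^* N})$ or $M = \Omega(B\log^{(c)} N)$ for a constant c; otherwise it is $O(S(N)\log^* N/B)$. The result gives no new bounds for external priority queues, but any external priority queue lower bound implies an external sorting lower bound.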
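A small numeric sketch of the bound (function names are hypothetical): it evaluates $\frac{1}{B}\sum_{i\ge 0} S(B\log^{(i)}(N/B))$ for a given S, using base-2 logarithms and truncating the iterated logarithm once it drops to 1 or below. The number of terms is then log*(N/B), which is where the log* N factor comes from when S is essentially constant.

```python
import math

def iterated_logs(x):
    """Yield x, log2 x, log2 log2 x, ... while the value exceeds 1."""
    while x > 1:
        yield x
        x = math.log2(x)

def log_star(x):
    """Number of times log2 must be applied before the value drops to <= 1."""
    count = 0
    while x > 1:
        x = math.log2(x)
        count += 1
    return count

def reduction_cost(S, N, B):
    """(1/B) * sum_{i>=0} S(B * log^(i)(N/B)), truncated as described above."""
    return sum(S(B * v) for v in iterated_logs(N / B)) / B
```

For N = 2^26 and B = 2^10, N/B = 2^16 gives the four terms 2^16, 16, 4, 2, so a constant S(N) = 1 yields 4/B = log*(N/B)/B, whereas S = log2 yields (26 + 14 + 12 + 11)/B.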

Outline: How Thorup did it (at a high level); how we extend it to external memory (at a high level); open problems.

Thorup's Reduction: Word RAM model: each word consists of $w \ge \log N$ bits, and there is a constant number of registers, each with capacity for one word. Atomic heap [Han 2004]: supports insertions, deletions, and predecessor queries on a set of size $O(\log^2 N)$ in constant time.

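Thorup's Reduction, O(S(N) log N) version: Keys are stored in O(log N) levels whose sizes halve from one level to the next: N keys, N/2 keys, N/4 keys, ..., down to 2c keys and a head of c keys. Invariant: keys in a higher level are larger than keys in a lower level. Keep the minimum in the head.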
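The leveled structure can be sketched as follows, under stated assumptions: LevelPQ is a hypothetical class, Python's sorted stands in for the black-box sorting algorithm, level 0 is the head, and level i may hold at most 2c·2^i keys (an assumed capacity rule). An overflowing level is sorted and its larger half moves up; an empty head is refilled by sorting the lowest nonempty level and pulling its c smallest keys down. Both moves preserve the ordering invariant between levels.

```python
class LevelPQ:
    """Hypothetical sketch of the leveled structure. Level 0 is the head.
    Invariant: every key in level i is <= every key in level i + 1.
    sorted() plays the role of the black-box sorting algorithm."""

    def __init__(self, c=2):
        self.c = c
        self.levels = [[]]          # each level is an unsorted list of keys

    def _capacity(self, i):
        return 2 * self.c * 2 ** i  # assumed rule: level i holds <= 2c * 2^i keys

    def insert(self, x):
        # Lowest nonempty level whose maximum is >= x; otherwise the top level.
        i = len(self.levels) - 1
        for j, level in enumerate(self.levels):
            if level and max(level) >= x:
                i = j
                break
        self.levels[i].append(x)
        self._push_up(i)

    def _push_up(self, i):
        # Rebalance: sort an overflowing level, keep the smaller half, move the rest up.
        while len(self.levels[i]) > self._capacity(i):
            keys = sorted(self.levels[i])
            half = self._capacity(i) // 2
            self.levels[i] = keys[:half]
            if i + 1 == len(self.levels):
                self.levels.append([])
            self.levels[i + 1].extend(keys[half:])
            i += 1

    def delete_min(self):
        i = next((j for j, level in enumerate(self.levels) if level), None)
        if i is None:
            raise IndexError("delete_min from empty LevelPQ")
        if i > 0:
            # Empty head: sort the lowest nonempty level, pull its c smallest keys down.
            keys = sorted(self.levels[i])
            self.levels[0] = keys[:self.c]
            self.levels[i] = keys[self.c:]
        head = self.levels[0]
        m = min(head)
        head.remove(m)
        return m
```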

Thorup's Reduction, O(S(N) log N) analysis: With O(log N) levels of sizes N, N/2, N/4, ..., 2c, c: rebalancing a level holding 2^j keys costs 2^j·S(N), and that level is sorted N/2^j times in N updates, so the amortized cost for the level is S(N). Over log N levels, the cost is O(S(N) log N).
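The amortization on this slide, written out per level and then summed over the O(log N) levels:

```latex
\frac{1}{N}\cdot
\underbrace{2^j S(N)}_{\text{cost of one rebalance}}\cdot
\underbrace{\frac{N}{2^j}}_{\text{rebalances in } N \text{ updates}}
= S(N),
\qquad
\sum_{j=1}^{O(\log N)} S(N) = O\big(S(N)\log N\big)
```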

Thorup's Reduction, O(S(N)) version: Keys are grouped into base sets of size log N, so the O(log N) levels hold N/log N, N/(2 log N), N/(4 log N), ..., 2, and 1 base sets. Splitting/merging base sets costs S(N) amortized. Rebalancing a level holding 2^j keys costs 2^j·S(N)/log N; there are N/2^j rebalances in N updates, so the amortized cost for that level is S(N)/log N. Total: O(S(N)) amortized cost.
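The same accounting with base sets: each level now costs S(N)/log N amortized, so the log N factor disappears, and the split/merge cost of base sets adds only another S(N).

```latex
\frac{1}{N}\cdot\frac{2^j S(N)}{\log N}\cdot\frac{N}{2^j} = \frac{S(N)}{\log N},
\qquad
\underbrace{\sum_{j=1}^{O(\log N)} \frac{S(N)}{\log N}}_{\text{level rebalancing}}
+ \underbrace{S(N)}_{\text{split/merge}}
= O\big(S(N)\big)
```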

Thorup's Reduction, O(1) head: The same structure, but the head (1 base set of size log N) is kept in an atomic heap of size log N, so operations on it cost O(1). Splitting/merging base sets still costs S(N) amortized; rebalancing a level holding 2^j keys costs 2^j·S(N)/log N with N/2^j rebalances in N updates, i.e., S(N)/log N amortized per level; the total amortized cost remains O(S(N)).

Thorup's Reduction with buffers: Each level also has a buffer: size N/log N for the level with N/log N base sets, N/(2 log N) for the level with N/(2 log N) base sets, N/(4 log N) for the level with N/(4 log N) base sets, and so on down to 2 base sets and 1 base set, with the head in an atomic heap of size log N at O(1) cost. Amortized cost: O(S(N)).

Externalize Thorup's Reduction: Where does B come in? How do we replace the atomic heap? How do we handle deletions in external memory?

Where does B come in? The levels now hold N/(B log N), N/(2B log N), N/(4B log N), ..., 2, and 1 base sets, while the buffers keep sizes N/log N, N/(2 log N), N/(4 log N), .... The head is a buffer of size B log N.

I/O-efficient Flush Operation: A buffer of size |R| is flushed into k substructures. Sort the keys in the buffer: O(R·S(R)/B) I/Os. Distribute the keys to the k substructures: O(R/B + k) I/Os. Total I/O cost: O(R·S(N)/B + k). If k = O(R/B), the total flush cost is O(R·S(N)/B), so the amortized cost per key is O(S(N)/B).
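A sketch of the two flush steps (flush and the splitter representation are hypothetical): sort the buffer with the black-box sort, then make one left-to-right pass that hands each key to its substructure. Because the pass moves monotonically through the k substructures, each is visited once and the output is written sequentially, which is the source of the O(R/B + k) distribution term.

```python
def flush(buffer, splitters):
    """Sort the buffer, then distribute it in one pass to k = len(splitters) + 1
    substructures; substructure t receives keys x with splitters[t-1] < x <= splitters[t]."""
    keys = sorted(buffer)            # black-box sort: O(R * S(R) / B) I/Os externally
    parts = [[] for _ in range(len(splitters) + 1)]
    t = 0
    for x in keys:                   # single scan: O(R/B + k) I/Os externally
        while t < len(splitters) and x > splitters[t]:
            t += 1                   # advance to the next substructure
        parts[t].append(x)
    return parts
```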

Where does B come in? If a level holds 2^j keys: largest buffer size 2^j/log N; largest number of base sets 2^j/(B log N); smallest base set (the head) size B log N. Amortized I/O cost for flushing level buffers: O(S(N)/B).
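Plugging these sizes into the flush bound shows why the condition k = O(R/B) is met: the number of base sets is exactly the buffer size divided by B.

```latex
R = \frac{2^j}{\log N},\quad
k = \frac{2^j}{B\log N} = \frac{R}{B}
\;\Longrightarrow\;
O\!\left(\frac{R\,S(N)}{B} + k\right) = O\!\left(\frac{R\,S(N)}{B}\right),
\text{ i.e. } O\!\left(\frac{S(N)}{B}\right) \text{ per key}
```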

Replacing the Atomic Heap: The head is a buffer of size R = B log N, with k = log N, so k = R/B.

Replacing the Atomic Heap: Recursively build the structure in the head, a buffer of size B log N (head of size O(B log N)). Amortized I/O cost: O(S(N)/B).

Recursively Build Layers: The layers hold N keys, B log(N/B) keys, B log log(N/B) keys, and so on, for O(log* N) layers, ending with 2^c·B keys and cB keys. Level rebalancing (move base sets around, redistribute buffers) costs S(N)/(B log N) for one level and S(N)/B for one layer, i.e., S(N) log* N/B amortized I/O cost.

Recursively Build Layers (layer rebalancing): Rebuild the first (last) level. This costs S(N)/B for one layer, i.e., S(N) log* N/B amortized I/O cost over the O(log* N) layers.

Recursively Build Layers: A memory buffer of size O(B) sits in front of the layers (N keys, B log(N/B) keys, B log log(N/B) keys, ..., 2^c·B keys, cB keys), with R = B and k = log* N.

Recursively Build Layers: The memory buffer has amortized cost log* N/B. I/O cost per update: O(S(N) log* N/B).
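Summing the per-layer rebalancing cost over the O(log* N) layers and adding the memory-buffer cost gives the per-update bound (using S(N) = Ω(1), since any sort must at least read its input):

```latex
\underbrace{O(\log^* N)\cdot O\!\left(\frac{S(N)}{B}\right)}_{\text{layers}}
+ \underbrace{\frac{\log^* N}{B}}_{\text{memory buffer}}
= O\!\left(\frac{S(N)\log^* N}{B}\right)
```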

Handle Deletions: Following a pointer to perform a deletion takes 1 I/O per deletion. Instead, use deleting signals: Delete x -> Insert (-, x), and perform the actual deletion afterwards. Unlike the buffer tree, we don't have access to the leaves (base sets). Invariant: only process deleting signals in the head.
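The signal idea can be illustrated on an ordinary in-memory heap (SignalPQ is a hypothetical class; heapq stands in for the external structure). delete(x) inserts a signal with key x instead of locating x. Signals are ordered before keys with the same value, so a signal always reaches the head before the key it cancels, and signals are only acted on there. The sketch assumes, as Delete does, that x is currently present.

```python
import heapq
from collections import Counter

class SignalPQ:
    """Deletion by signals: delete(x) inserts (x, DELETE); cancellation happens
    only when entries reach the head of the heap."""
    DELETE, KEY = 0, 1   # DELETE sorts first, so a signal surfaces before its key

    def __init__(self):
        self.heap = []
        self.pending = Counter()   # signals seen at the head, not yet matched

    def insert(self, x):
        heapq.heappush(self.heap, (x, self.KEY))

    def delete(self, x):
        # Assumes x is present; no pointer is followed, only a signal is inserted.
        heapq.heappush(self.heap, (x, self.DELETE))

    def delete_min(self):
        while self.heap:
            x, kind = heapq.heappop(self.heap)
            if kind == self.DELETE:
                self.pending[x] += 1       # remember the signal
            elif self.pending[x] > 0:
                self.pending[x] -= 1       # key cancelled by an earlier signal
            else:
                return x
        raise IndexError("delete_min from empty SignalPQ")
```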

Schedule: Avoid repeated sorting. If the head or the memory buffer is unbalanced, run three stages. Flush stage: flush all overflowed buffers and rebalance all unbalanced base sets. Push stage: rebalance all overflowed layers and levels (expand). Pull stage: deal with delete signals and rebalance all underflowed layers and levels (shrink).

Open problems: Optimal reduction? Possible routes: a priority queue that supports insertions/deletions in O(1/B) I/Os for a set of size O(B log^(c) N), or a new reduction framework. Better (than log log N) reduction in the cache-oblivious model? It is hard to do I/O-efficient flushing and rebalancing without knowing B.