High Probability Generalization Bounds for Stable Algorithms

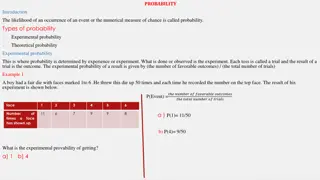

This content delves into high probability generalization bounds for uniformly stable algorithms, showcasing insights on stability and generalization in machine learning. It covers topics such as stability in the face of individual examples, known bounds from previous studies, stochastic convex optimization, and proof approaches like tail boundaries. The content emphasizes the importance of uniformly stable algorithms for learning VC classes with optimal rates, bypassing limitations and requiring innovative tools and ideas.

Download Presentation

Please find below an Image/Link to download the presentation.

The content on the website is provided AS IS for your information and personal use only. It may not be sold, licensed, or shared on other websites without obtaining consent from the author.If you encounter any issues during the download, it is possible that the publisher has removed the file from their server.

You are allowed to download the files provided on this website for personal or commercial use, subject to the condition that they are used lawfully. All files are the property of their respective owners.

The content on the website is provided AS IS for your information and personal use only. It may not be sold, licensed, or shared on other websites without obtaining consent from the author.

E N D

Presentation Transcript

High probability generalization bounds for uniformly stable algorithms Vitaly Feldman Brain with Jan Vondrak

Generalization bounds Dataset? = ?1, ,?? ?? Loss function ?,? [0,1] for ? ? Estimation error/generalization gap ? 1 ?= ? ? ? ??,? ? ?=1 ??,?? Learning algorithm ? Model ??

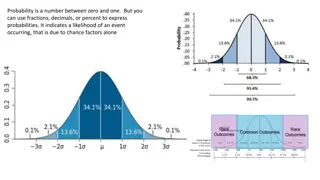

Stability Low sensitivity to individual examples implies generalization [Rogers,Wagner 78; Devroye,Wagner 79, ..] [Bousquet,Elisseeff 01] ? has uniform stability ? wrt if for all ?,? that differ in 1 element and all ? ?: ??,? ?? ,? ? 1 ? ,? 0,1 , e.g. ? = 3

Known bounds [Rogers,Wagner 78; Devroye,Wagner 79] ? ?? ? ? ? 1 2 ? ?? ? ? ? + ? ?[FV 18] [Bousquet,Elisseeff 01] log 1/? ? ?? ? ??| ?| ? ? log 1/? + ?[FV 18] ? [ShalevShvartz,Shamir,Srebro,Sridharan 09] For ERM ? 1 ? ??| ?| ? + ? ? 4

New bound log 1/? ? ?? ? ??| ?| ?log(?)log ?/? + ? Optimal (up to logs) 1 ? Same concentration as fixed model if ? = ? 5

Stochastic convex optimization ?1 ? ? = ?2 For all ? ?, (?,?) is convex 1-Lipschitz in ? Minimize ?? ?? ?[ (?,?)] over ? ?2 1} Approaches: Online-to-batch (single-pass): 1 ? w.h.p. ? ? [F. 16] Uniform convergence: 1 ? Uniform stability: ? = o Regularized ERM [BE 01; SSSS 09] o (Stochastic) gradient descent for smooth func. [Hardt,Recht,Singer 16] 1 ? rate High probability and (nearly) optimal ? 6

Proof approach Tail boundary ??(?,?,?) Smallest ? such that for all ?-uniformly stable ?, : ? [ ?,?] ?? ? ?? ? ? ? Dataset size reduction: for any ? ? ???,?,? ???/??,?,? Range reduction: ???,?,? ??/2 ?,? ?log ?/? ,? +? log(?/?) ? 7

Conclusions Stability and generalization in ML Bypasses limitations of uniform convergence and online-to-batch Limited set of general analysis techniques o Requires new tools and ideas Limited set of algorithmic techniques o Connections to differential privacy o Uniformly stable algorithms for learning VC classes with (nearly) optimal rate [Dagan,F. 19] 8