Introduction to Information Retrieval Basics

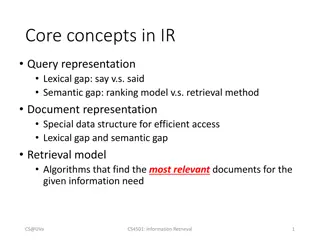

Information retrieval (IR) involves finding unstructured material that satisfies an information need. This lecture covers incidence matrices, term weighting, the vector space model (VSM), and document vectors, which IR uses to locate relevant documents efficiently, as well as the role of IR in text mining, information retrieval and extraction, and knowledge discovery.


Presentation Transcript

Introduction to Information Retrieval
Lecture 1: Term Weighting and VSM
wyang@ntu.edu.tw
Introduction to Information Retrieval, Ch. 1-3, 6

Basics of Information Retrieval

Definition of information retrieval
Information retrieval (IR) is finding material (usually documents) of an unstructured nature (usually text) that satisfies an information need from within large collections (usually stored on computers).
IR is the foundation of text mining.
[Figure: Info Retrieval & Extraction, Text Mining, Knowledge Discovery]

Unstructured data in 1650
Which plays of Shakespeare contain the words BRUTUS AND CAESAR, but not CALPURNIA?
One could scan all of Shakespeare's plays for BRUTUS and CAESAR, then strip out lines containing CALPURNIA.
Why is scanning not the solution?
- Slow (for large collections)
- Advanced operations are not feasible (e.g., find the word ROMANS near COUNTRYMEN)

Term-document incidence matrix

| Term | Antony and Cleopatra | Julius Caesar | The Tempest | Hamlet | Othello | Macbeth |
|---|---|---|---|---|---|---|
| ANTONY | 1 | 1 | 0 | 0 | 0 | 1 |
| BRUTUS | 1 | 1 | 0 | 1 | 0 | 0 |
| CAESAR | 1 | 1 | 0 | 1 | 1 | 1 |
| CALPURNIA | 0 | 1 | 0 | 0 | 0 | 0 |
| CLEOPATRA | 1 | 0 | 0 | 0 | 0 | 0 |
| MERCY | 1 | 0 | 1 | 1 | 1 | 1 |
| WORSER | 1 | 0 | 1 | 1 | 1 | 0 |

An entry is 1 if the term occurs in the play (e.g., CALPURNIA occurs in Julius Caesar) and 0 if it doesn't (e.g., CALPURNIA doesn't occur in The Tempest).

Incidence vectors
So we have a 0/1 vector for each term. To answer the query BRUTUS AND CAESAR AND NOT CALPURNIA:
- Take the vectors for BRUTUS, CAESAR, and CALPURNIA
- Complement the vector of CALPURNIA
- Do a bitwise AND on the three vectors

110100 AND 110111 AND 101111 = 100100
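
The bitwise query above can be sketched with Python integers as bit vectors. This is a minimal illustration, assuming the six plays are ordered as in the incidence matrix with the leftmost bit for Antony and Cleopatra; the names `PLAYS`, `complement`, and `matching_plays` are hypothetical helpers, not part of the slides.

```python
# One bit per play, leftmost bit = first play in this (assumed) order.
PLAYS = ["Antony and Cleopatra", "Julius Caesar", "The Tempest",
         "Hamlet", "Othello", "Macbeth"]
N_DOCS = len(PLAYS)
MASK = (1 << N_DOCS) - 1  # keeps NOT from setting bits beyond the 6 plays

brutus = 0b110100
caesar = 0b110111
calpurnia = 0b010000


def complement(v: int) -> int:
    """Bitwise NOT restricted to the N_DOCS valid positions."""
    return ~v & MASK


def matching_plays(v: int) -> list[str]:
    """Names of the plays whose bit is set (leftmost bit = PLAYS[0])."""
    return [p for i, p in enumerate(PLAYS) if (v >> (N_DOCS - 1 - i)) & 1]


result = brutus & caesar & complement(calpurnia)
print(format(result, "06b"))   # 100100
print(matching_plays(result))  # ['Antony and Cleopatra', 'Hamlet']
```

Masking after `~` matters because Python integers are unbounded; without it the complement would be a negative number with infinitely many leading 1 bits.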

0/1 vector for BRUTUS
(Same term-document incidence matrix as above.)
Result of BRUTUS AND CAESAR AND NOT CALPURNIA: 1 0 0 1 0 0, i.e., Antony and Cleopatra and Hamlet.

Too big to build the incidence matrix
Consider $N = 10^6$ documents, each with about 1,000 tokens: a total of $10^9$ tokens (one billion).
Assume there are $M = 500{,}000$ distinct terms in the collection.
Then the matrix has $M \times N = 500{,}000 \times 10^6$ = half a trillion ($5 \times 10^{11}$) 0s and 1s.
But the matrix has no more than $10^9$ 1s, so it is extremely sparse: at most $10^9 / (5 \times 10^{11}) = 0.2\%$ of the entries are 1.
What is a better representation? We only record the 1s.

Inverted index
For each term t, we store a list of all documents that contain t.
[Figure: a sorted dictionary of terms, each pointing to its postings list]
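
A minimal sketch of an inverted index, assuming naive lowercase whitespace tokenization and a hypothetical four-document toy collection. The `intersect` helper shows the usual linear merge of two sorted postings lists for an AND query; the function names are illustrative, not from the slides.

```python
from collections import defaultdict


def build_inverted_index(docs: dict[int, str]) -> dict[str, list[int]]:
    """Map each term to a sorted postings list of the docIDs containing it."""
    postings: dict[str, set[int]] = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():  # naive whitespace tokenization
            postings[term].add(doc_id)
    # Dictionary sorted by term; each postings list sorted by docID.
    return {term: sorted(ids) for term, ids in sorted(postings.items())}


def intersect(p1: list[int], p2: list[int]) -> list[int]:
    """Merge two sorted postings lists (Boolean AND) in O(len(p1) + len(p2))."""
    i = j = 0
    answer: list[int] = []
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:
            answer.append(p1[i])
            i += 1
            j += 1
        elif p1[i] < p2[j]:
            i += 1
        else:
            j += 1
    return answer


# Hypothetical toy collection, docIDs 1-4
docs = {
    1: "Brutus killed Caesar",
    2: "Caesar was ambitious",
    3: "Brutus is an honourable man",
    4: "Calpurnia warned Caesar about Brutus",
}
index = build_inverted_index(docs)
print(index["brutus"])                              # [1, 3, 4]
print(intersect(index["brutus"], index["caesar"]))  # [1, 4]
```

Only the 1s of the incidence matrix are stored: a term absent from a document simply does not appear in that document's postings.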

Ranked Retrieval

Problem with Boolean search
In Boolean retrieval, documents either match or don't.
Boolean queries often result in either too few (= 0) or too many (1000s of) results.
- Query [standard user dlink 650]: 200,000 hits
- Query [standard user dlink 650 no card found]: 0 hits
Boolean search is good for expert users with a precise understanding of their needs and of the collection, but not for the majority of users.

Ranked retrieval
With ranking, large result sets are not an issue: more relevant results are ranked higher than less relevant ones, so the user can decide how many results to look at.

Scoring as the basis of ranked retrieval
Assign a score, say in [0, 1], to each query-document pair to measure how well the document and query match.
- If the query term does not occur in the document, the score should be 0.
- The more frequent the query term in the document, the higher the score.

Term Frequency

Binary incidence matrix
(Same term-document incidence matrix as above.)
Each document is represented as a binary vector $\in \{0, 1\}^{|V|}$.

Count matrix

| Term | Antony and Cleopatra | Julius Caesar | The Tempest | Hamlet | Othello | Macbeth |
|---|---|---|---|---|---|---|
| ANTONY | 157 | 73 | 0 | 0 | 0 | 1 |
| BRUTUS | 4 | 157 | 0 | 2 | 0 | 0 |
| CAESAR | 232 | 227 | 0 | 2 | 1 | 0 |
| CALPURNIA | 0 | 10 | 0 | 0 | 0 | 0 |
| CLEOPATRA | 57 | 0 | 0 | 0 | 0 | 0 |
| MERCY | 2 | 0 | 3 | 8 | 5 | 8 |
| WORSER | 2 | 0 | 1 | 1 | 1 | 5 |

Each document is now represented as a count vector $\in \mathbb{N}^{|V|}$.

Bag of words model
We do not consider the order of words in a document: "John is quicker than Mary" and "Mary is quicker than John" are represented the same way.
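
A bag of words can be sketched as a multiset of tokens. This assumes simple lowercase whitespace tokenization; `bag_of_words` is a hypothetical helper.

```python
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Count tokens; word order is discarded (naive lowercase split)."""
    return Counter(text.lower().split())


a = bag_of_words("John is quicker than Mary")
b = bag_of_words("Mary is quicker than John")
print(a == b)  # True
```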

Term frequency tf
The term frequency $\mathrm{tf}_{t,d}$ of term t in document d is defined as the number of times that t occurs in d.
We use tf when computing query-document match scores. But relevance does not increase proportionally with term frequency.
Example: a document with tf = 10 occurrences of the term is more relevant than a document with tf = 1 occurrence of the term, but not 10 times more relevant.

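Log frequency weighting
The log frequency weight of term t in d is defined as follows:
$$w_{t,d} = \begin{cases} 1 + \log_{10} \mathrm{tf}_{t,d} & \text{if } \mathrm{tf}_{t,d} > 0 \\ 0 & \text{otherwise} \end{cases}$$
$\mathrm{tf}_{t,d} \to w_{t,d}$: 0 → 0, 1 → 1, 2 → 1.3, 10 → 2, 1000 → 4, etc.
Why use log?
The score for a query-document pair sums these weights over the terms that occur in both q and d:
$$\text{tf-matching-score}(q, d) = \sum_{t \in q \cap d} (1 + \log_{10} \mathrm{tf}_{t,d})$$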

Exercise
Compute the tf matching score for the following query-document pairs.
- q: [information on cars], d: "all you have ever wanted to know about cars"
  score = 1 + log 1 = 1
- q: [information on cars], d: "information on trucks, information on planes, information on trains"
  score = (1 + log 3) + (1 + log 3) ≈ 2.95
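
The exercise can be checked with a small sketch of the tf matching score. It assumes log base 10, lowercase tokenization that treats commas as spaces, and no stop-word removal (so "on" counts as a query term, as in the exercise); `tokenize` and `tf_matching_score` are hypothetical names.

```python
import math
from collections import Counter


def log_tf_weight(tf: int) -> float:
    """Log frequency weight: 1 + log10(tf) if tf > 0, else 0."""
    return 1 + math.log10(tf) if tf > 0 else 0.0


def tokenize(text: str) -> list[str]:
    # Assumption: lowercase, treat commas as spaces, no stop-word removal.
    return text.lower().replace(",", " ").split()


def tf_matching_score(query: str, doc: str) -> float:
    """Sum of 1 + log10(tf_{t,d}) over distinct query terms t that occur in d."""
    doc_tf = Counter(tokenize(doc))
    return sum(log_tf_weight(doc_tf[t]) for t in set(tokenize(query)) if doc_tf[t] > 0)


q = "information on cars"
d1 = "all you have ever wanted to know about cars"
d2 = "information on trucks, information on planes, information on trains"
print(tf_matching_score(q, d1))            # 1.0
print(round(tf_matching_score(q, d2), 2))  # 2.95
```

In the second pair, "information" and "on" each occur three times and "cars" not at all, which gives the two $1 + \log_{10} 3$ terms.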

TF-IDF Weighting

Desired weight for frequent terms
Frequent terms are less informative than rare terms.
Consider a term in the query that is frequent in the collection (e.g., GOOD, INCREASE, LINE).

Desired weight for rare terms
Rare terms are more informative than frequent terms.
Consider a term in the query that is rare in the collection (e.g., ARACHNOCENTRIC).
A document containing this term is very likely to be relevant.
We want high weights for rare terms like ARACHNOCENTRIC.

Document frequency
We want high weights for rare terms like ARACHNOCENTRIC.
We want low (but still positive) weights for frequent words like GOOD, INCREASE, and LINE.
We will use document frequency to factor this into computing the matching score.
The document frequency is the number of documents in the collection that the term occurs in.

idf weight
$\mathrm{df}_t$ is the document frequency, the number of documents that t occurs in.
$\mathrm{df}_t$ is an inverse measure of the informativeness of term t.
We define the idf weight of term t as follows:
$$\mathrm{idf}_t = \log_{10} \frac{N}{\mathrm{df}_t}$$
(N is the number of documents in the collection.)
$\mathrm{idf}_t$ is a measure of the informativeness of the term.
We use $\log(N/\mathrm{df}_t)$ instead of $N/\mathrm{df}_t$ to dampen the effect of idf (i.e., log is used for both tf and df).

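Examples for idf
Compute $\mathrm{idf}_t$ using the formula $\mathrm{idf}_t = \log_{10}(N/\mathrm{df}_t)$, with N = 1,000,000:

| term | $\mathrm{df}_t$ | $\mathrm{idf}_t$ |
|---|---|---|
| calpurnia | 1 | 6 |
| animal | 100 | 4 |
| sunday | 1,000 | 3 |
| fly | 10,000 | 2 |
| under | 100,000 | 1 |
| the | 1,000,000 | 0 |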
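
The idf column can be reproduced with a short sketch, assuming N = 1,000,000 as in the example and log base 10; the `idf` helper is a hypothetical name.

```python
import math

N = 1_000_000  # collection size assumed in the example
df = {"calpurnia": 1, "animal": 100, "sunday": 1_000, "fly": 10_000,
      "under": 100_000, "the": 1_000_000}


def idf(df_t: int, n_docs: int = N) -> float:
    """idf_t = log10(N / df_t)."""
    return math.log10(n_docs / df_t)


for term, d in df.items():
    print(f"{term:10s} df={d:>9,} idf={idf(d):.0f}")
```

A term that occurs in every document ("the") gets idf 0, so it contributes nothing to tf-idf scores.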

Collection frequency vs. document frequency

| word | collection frequency | document frequency |
|---|---|---|
| INSURANCE | 10440 | 3997 |
| TRY | 10422 | 8760 |

Collection frequency of t: number of tokens of t in the collection.
Document frequency of t: number of documents t occurs in.
Document/collection frequency weighting is computed from a known collection, or estimated.
Which word is more informative?

Example: cf vs. df

| word | cf | df |
|---|---|---|
| ferrari | 10422 | 17 |
| insurance | 10440 | 3997 |

tf-idf weighting
The tf-idf weight of a term is the product of its tf weight and its idf weight:
$$w_{t,d} = \underbrace{(1 + \log_{10} \mathrm{tf}_{t,d})}_{\text{tf-weight}} \cdot \underbrace{\log_{10} \frac{N}{\mathrm{df}_t}}_{\text{idf-weight}}$$
Best known weighting scheme in information retrieval.
Note: the "-" in tf-idf is a hyphen, not a minus sign.
Alternative names: tf.idf, tf × idf
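
The tf-idf formula translates directly into a one-line function. This is a sketch assuming log base 10 and returning 0 when the term is absent from the document; the example numbers are hypothetical.

```python
import math


def tf_idf(tf: int, df: int, n_docs: int) -> float:
    """w_{t,d} = (1 + log10 tf) * log10(N / df); 0 if t does not occur in d."""
    if tf == 0 or df == 0:
        return 0.0
    return (1 + math.log10(tf)) * math.log10(n_docs / df)


# Hypothetical numbers for a collection of N = 1,000,000 documents
print(tf_idf(tf=10, df=1_000, n_docs=1_000_000))          # (1 + log10 10) * log10 1000 = 6
print(tf_idf(tf=1_000, df=1_000_000, n_docs=1_000_000))   # idf is 0, so the weight is 0.0
```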

Summary: tf-idf
Assign a tf-idf weight to each term t in each document d:
$$w_{t,d} = (1 + \log_{10} \mathrm{tf}_{t,d}) \cdot \log_{10} \frac{N}{\mathrm{df}_t}$$
The tf-idf weight
- increases with the number of occurrences within a document (term frequency), and
- increases with the rarity of the term in the collection (inverse document frequency).

Exercise: Term, collection and document frequency

| Quantity | Symbol | Definition |
|---|---|---|
| term frequency | $\mathrm{tf}_{t,d}$ | number of occurrences of t in d |
| document frequency | $\mathrm{df}_t$ | number of documents in the collection that t occurs in |
| collection frequency | $\mathrm{cf}_t$ | total number of occurrences of t in the collection |

- Relationship between df and cf?
- Relationship between tf and cf?
- Relationship between tf and df?
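
To experiment with the three quantities, here is a small sketch over a hypothetical three-document toy collection (not from the slides). It is built so that two terms have the same cf but different df, echoing the INSURANCE vs. TRY example; `df` and `cf` are illustrative helpers.

```python
from collections import Counter

# Hypothetical toy collection (not from the slides)
docs = {
    "d1": "insurance insurance insurance try",
    "d2": "try car",
    "d3": "try home",
}
# tf[d][t]: term frequency of t in d
tf = {d: Counter(text.split()) for d, text in docs.items()}


def df(term: str) -> int:
    """Number of documents containing the term."""
    return sum(1 for counts in tf.values() if counts[term] > 0)


def cf(term: str) -> int:
    """Total number of occurrences of the term in the collection."""
    return sum(counts[term] for counts in tf.values())


for t in ("insurance", "try"):
    print(t, "df =", df(t), "cf =", cf(t))
```

Both terms occur three times in total, but "insurance" is concentrated in one document while "try" is spread across all three, so df separates them where cf does not.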

Vector Space Model

Binary incidence matrix
(Same term-document incidence matrix as above.)
Each document is represented as a binary vector $\in \{0, 1\}^{|V|}$.

Count matrix
(Same count matrix as above.)
Each document is now represented as a count vector $\in \mathbb{N}^{|V|}$.

Binary → count → weight matrix

| Term | Antony and Cleopatra | Julius Caesar | The Tempest | Hamlet | Othello | Macbeth |
|---|---|---|---|---|---|---|
| ANTONY | 5.25 | 3.18 | 0.0 | 0.0 | 0.0 | 0.35 |
| BRUTUS | 1.21 | 6.10 | 0.0 | 1.0 | 0.0 | 0.0 |
| CAESAR | 8.59 | 2.54 | 0.0 | 1.51 | 0.25 | 0.0 |
| CALPURNIA | 0.0 | 1.54 | 0.0 | 0.0 | 0.0 | 0.0 |
| CLEOPATRA | 2.85 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |
| MERCY | 1.51 | 0.0 | 1.90 | 0.12 | 5.25 | 0.88 |
| WORSER | 1.37 | 0.0 | 0.11 | 4.15 | 0.25 | 1.95 |

Each document is now represented as a real-valued vector of tf-idf weights $\in \mathbb{R}^{|V|}$.

Documents as vectors
Each document is now represented as a real-valued vector of tf-idf weights $\in \mathbb{R}^{|V|}$.
So we have a |V|-dimensional real-valued vector space.
Terms are axes of the space.
Documents are points or vectors in this space.
Very high-dimensional: tens of millions of dimensions when applied to the web, because the web contains a very large number of distinct terms.
Each vector is very sparse: most entries are zero.

Vector Space Model

Vector Space Model
Two documents in the plane spanned by term A (Antony) and term B (Brutus):
- D1: Antony and Cleopatra = (13.1, 3.0)
- D2: Julius Caesar = (11.4, 8.3)

$$\cos(D_1, D_2) = \frac{D_1 \cdot D_2}{|D_1|\,|D_2|} = \frac{13.1 \times 11.4 + 3.0 \times 8.3}{\sqrt{13.1^2 + 3.0^2}\,\sqrt{11.4^2 + 8.3^2}}$$
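
The cosine for D1 and D2 can be evaluated with a small sketch; `cosine` is a hypothetical helper that works for vectors of any dimension, and the two vectors are the (Antony, Brutus) weights given above.

```python
import math


def cosine(x: list[float], y: list[float]) -> float:
    """Dot product divided by the product of the two L2 lengths."""
    dot = sum(a * b for a, b in zip(x, y))
    return dot / (math.sqrt(sum(a * a for a in x)) * math.sqrt(sum(b * b for b in y)))


d1 = [13.1, 3.0]  # Antony and Cleopatra: (Antony, Brutus)
d2 = [11.4, 8.3]  # Julius Caesar: (Antony, Brutus)
print(round(cosine(d1, d2), 3))  # 0.919
```

Numerically, the numerator is 174.24 and the denominator is about 189.51, so the two plays are close in this two-term space.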

Applications of Vector Space Model
- Clustering
- Classification

[Figure: documents (*) plotted in the plane of term A and term B, grouped into Cluster 1, Cluster 2, and Cluster 3, each with a centroid]
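
As an illustration of how centroids support both applications, here is a minimal sketch that computes cluster centroids and assigns a new document to the class with the most similar centroid. The 2-D points are hypothetical, and using cosine to compare a document with a centroid is one possible choice, not necessarily the one behind the figure.

```python
import math


def cosine(x: tuple[float, ...], y: tuple[float, ...]) -> float:
    dot = sum(a * b for a, b in zip(x, y))
    return dot / (math.sqrt(sum(a * a for a in x)) * math.sqrt(sum(b * b for b in y)))


def centroid(points: list[tuple[float, ...]]) -> tuple[float, ...]:
    """Component-wise mean of the vectors in a cluster."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))


def nearest_centroid(doc: tuple[float, ...], centroids: dict[str, tuple[float, ...]]) -> str:
    """Assign doc to the label whose centroid has the highest cosine with it."""
    return max(centroids, key=lambda label: cosine(doc, centroids[label]))


# Hypothetical 2-D documents (term A, term B), grouped into three clusters
clusters = {
    "Cluster 1": [(1.0, 5.0), (2.0, 6.0)],
    "Cluster 2": [(5.0, 1.0), (6.0, 2.0)],
    "Cluster 3": [(4.0, 4.0), (5.0, 5.0)],
}
centroids = {label: centroid(pts) for label, pts in clusters.items()}
print(centroids["Cluster 1"])                   # (1.5, 5.5)
print(nearest_centroid((1.0, 4.0), centroids))  # Cluster 1
```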

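Issues about Vector Space Model (1)
Terms are treated as orthogonal axes:
- "tornado" vs. "apple": D1 = (1, 0), D2 = (0, 1), cosine = 0
- "tornado" vs. "hurricane": D1 = (1, 0), D2 = (0, 1), cosine = 0
The second pair of documents is related, but the model ignores dependence between terms.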

Issues about Vector Space Model (2)
High dimensionality (curse of dimensionality); approaches include feature selection and latent semantic indexing.
Each document is a vector (column) and each term is an axis (row):

| term | Antony and Cleopatra | Julius Caesar | The Tempest | Hamlet | Othello | Macbeth |
|---|---|---|---|---|---|---|
| Antony | 13.1 | 11.4 | 0.0 | 0.0 | 0.0 | 0.0 |
| Brutus | 3.0 | 8.3 | 0.0 | 1.0 | 0.0 | 0.0 |
| Caesar | 2.3 | 2.3 | 0.0 | 0.5 | 0.3 | 0.3 |
| Calpurnia | 0.0 | 11.2 | 0.0 | 0.0 | 0.0 | 0.0 |
| Cleopatra | 17.7 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |
| mercy | 0.5 | 0.0 | 0.7 | 0.9 | 0.9 | 0.3 |
| worser | 1.2 | 0.0 | 0.6 | 0.6 | 0.6 | 0.0 |

Here the dimensionality is 7.

Queries as vectors
Do the same for queries: represent them as vectors in the high-dimensional space.
Rank documents according to their proximity to the query, where proximity = similarity ≈ negative distance.
The goal is to rank relevant documents higher than nonrelevant documents.

Use angle instead of distance
Rank documents according to their angle with the query.
For example: take a document d and append it to itself. Call this document d′. d′ is twice as long as d.
Semantically, d and d′ have the same content.
The angle between the two documents is 0, corresponding to maximal similarity...
...even though the Euclidean distance between the two documents can be quite large.
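
The d vs. d′ argument can be checked numerically. This sketch takes d to be the Julius Caesar count vector from the count matrix (ANTONY through WORSER) and models "d appended to itself" as doubling every count; `angle` and `euclidean` are hypothetical helpers.

```python
import math


def angle(x: list[float], y: list[float]) -> float:
    """Angle in radians between two vectors (cosine clamped against rounding)."""
    dot = sum(a * b for a, b in zip(x, y))
    c = dot / (math.sqrt(sum(a * a for a in x)) * math.sqrt(sum(b * b for b in y)))
    return math.acos(max(-1.0, min(1.0, c)))


def euclidean(x: list[float], y: list[float]) -> float:
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


d = [73, 157, 227, 10, 0, 0, 0]  # Julius Caesar counts, ANTONY ... WORSER
d_prime = [2 * x for x in d]     # d appended to itself doubles every count
print(angle(d, d_prime))         # essentially 0: maximal similarity
print(euclidean(d, d_prime))     # equals the length of d, about 285.67
```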

From angles to cosines
The following two notions are equivalent:
- Rank documents according to the angle between query and document in increasing order.
- Rank documents according to cosine(query, document) in decreasing order.
They are equivalent because cosine is a monotonically decreasing function of the angle on the interval [0°, 180°].

Length normalization
A vector can be (length-)normalized by dividing each of its components by its length; here we use the $L_2$ norm:
$$\|\vec{x}\|_2 = \sqrt{\sum_i x_i^2}$$
This maps vectors onto the unit sphere, since after normalization $\|\vec{x}\|_2 = 1$.
As a result, longer documents and shorter documents have weights of the same order of magnitude.
Effect on the two documents d and d′ (d appended to itself): they have identical vectors after length normalization.
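
A minimal sketch of $L_2$ length normalization, reusing the Julius Caesar count vector as an assumed example for d; `l2_normalize` is a hypothetical helper that leaves an all-zero vector unchanged.

```python
import math


def l2_normalize(v: list[float]) -> list[float]:
    """Divide each component by the vector's L2 length; zero vectors are returned unchanged."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


d = [73, 157, 227, 10, 0, 0, 0]  # Julius Caesar counts, ANTONY ... WORSER
d_prime = [2 * x for x in d]     # d appended to itself
u, u_prime = l2_normalize(d), l2_normalize(d_prime)
print(all(math.isclose(a, b) for a, b in zip(u, u_prime)))  # True
print(math.isclose(sum(x * x for x in u), 1.0))             # True
```

`math.isclose` is used instead of `==` because dividing by slightly different floating-point norms can differ in the last bit.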

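Cosine similarity between query and document
$$\cos(\vec{q}, \vec{d}) = \frac{\vec{q} \cdot \vec{d}}{|\vec{q}|\,|\vec{d}|} = \frac{\sum_{i=1}^{|V|} q_i d_i}{\sqrt{\sum_{i=1}^{|V|} q_i^2}\,\sqrt{\sum_{i=1}^{|V|} d_i^2}}$$
$q_i$ is the tf-idf weight of term i in the query; $d_i$ is the tf-idf weight of term i in the document.
$|\vec{q}|$ and $|\vec{d}|$ are the lengths of $\vec{q}$ and $\vec{d}$.
This is the cosine similarity of $\vec{q}$ and $\vec{d}$, or, equivalently, the cosine of the angle between $\vec{q}$ and $\vec{d}$.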

Cosine similarity illustrated
[Figure]

Cosine: Example
How similar are these novels?
- SaS: Sense and Sensibility
- PaP: Pride and Prejudice
- WH: Wuthering Heights

Term frequencies (counts):

| term | SaS | PaP | WH |
|---|---|---|---|
| AFFECTION | 115 | 58 | 20 |
| JEALOUS | 10 | 7 | 11 |
| GOSSIP | 2 | 0 | 6 |
| WUTHERING | 0 | 0 | 38 |

Cosine: Example
Term frequencies (counts): same as above.
Log frequency weighting:

| term | SaS | PaP | WH |
|---|---|---|---|
| AFFECTION | 3.06 | 2.76 | 2.30 |
| JEALOUS | 2.0 | 1.85 | 2.04 |
| GOSSIP | 1.30 | 0 | 1.78 |
| WUTHERING | 0 | 0 | 2.58 |

(To simplify this example, we don't do idf weighting.)

Cosine: Example
Log frequency weighting & cosine normalization:

| term | SaS | PaP | WH |
|---|---|---|---|
| AFFECTION | 0.789 | 0.832 | 0.524 |
| JEALOUS | 0.515 | 0.555 | 0.465 |
| GOSSIP | 0.335 | 0.0 | 0.405 |
| WUTHERING | 0.0 | 0.0 | 0.588 |

cos(SaS, PaP) ≈ 0.789 × 0.832 + 0.515 × 0.555 + 0.335 × 0.0 + 0.0 × 0.0 ≈ 0.94
cos(SaS, WH) ≈ 0.79
cos(PaP, WH) ≈ 0.69
Why do we have cos(SaS, PaP) > cos(SaS, WH)?
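
The whole novels example can be reproduced end to end with a short sketch: log frequency weighting (base 10, no idf, as stated above), $L_2$ normalization, then dot products. The variable and function names are illustrative.

```python
import math

TERMS = ["affection", "jealous", "gossip", "wuthering"]
counts = {
    "SaS": {"affection": 115, "jealous": 10, "gossip": 2, "wuthering": 0},
    "PaP": {"affection": 58, "jealous": 7, "gossip": 0, "wuthering": 0},
    "WH": {"affection": 20, "jealous": 11, "gossip": 6, "wuthering": 38},
}


def log_tf(tf: int) -> float:
    return 1 + math.log10(tf) if tf > 0 else 0.0


def normalized_vector(tf_by_term: dict[str, int]) -> list[float]:
    """Log-frequency weights scaled to unit L2 length (no idf)."""
    w = [log_tf(tf_by_term[t]) for t in TERMS]
    norm = math.sqrt(sum(x * x for x in w))
    return [x / norm for x in w]


vecs = {name: normalized_vector(c) for name, c in counts.items()}


def cos(a: str, b: str) -> float:
    # Vectors are already unit length, so the cosine is just the dot product.
    return sum(x * y for x, y in zip(vecs[a], vecs[b]))


for pair in [("SaS", "PaP"), ("SaS", "WH"), ("PaP", "WH")]:
    print(pair, round(cos(*pair), 2))
```

This prints 0.94, 0.79, and 0.69, matching the slide. SaS and PaP share a high AFFECTION weight and lack WUTHERING, which WH has.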