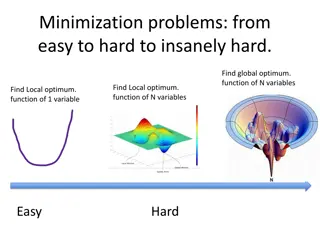

Optimizing Neural Networks with Parallel Methods

Discover how parallel methods can enhance the training of neural networks, overcoming the limitations of traditional optimization techniques like Stochastic Gradient Descent. Explore topics such as alternating minimization, equivalent problems, and incorporating L_2 regularization for improved performance.

Presentation Transcript

Parallel Methods for Neuron Network. Haihao Lu and Yuanchu Dang

Machine Learning and Artificial Neural Network

Problem of Interest: ANN. W is the weight; b is the bias; x is the feature; y is the label; l is the loss function. Each layer also applies an activation function.

Drawbacks of SGD: SGD is a first-order method, so it converges slowly. SGD suffers from the vanishing gradient problem. Most importantly, SGD is hard to parallelize.
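To make the vanishing gradient point concrete, here is a minimal sketch that tracks how much gradient signal survives a chain of scalar sigmoid layers. The function name `backprop_factor`, the single shared weight, and the choice of a sigmoid activation are all assumptions made for illustration; none of them come from the slides.

```python
import math

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def backprop_factor(depth, weight=1.0, x=0.5):
    """d(output)/d(input) for `depth` stacked scalar layers a <- sigmoid(weight * a).

    Hypothetical toy chain: one shared scalar weight, sigmoid activation.
    """
    a = x
    factor = 1.0
    for _ in range(depth):
        s = sigmoid(weight * a)
        # Chain rule for one layer: d sigmoid(w*a)/da = w * s * (1 - s), and s*(1-s) <= 0.25
        factor *= weight * s * (1.0 - s)
        a = s
    return factor

if __name__ == "__main__":
    for depth in (1, 5, 10, 20):
        print(depth, backprop_factor(depth))
```

Because each layer multiplies the gradient by at most `0.25 * weight`, the factor shrinks geometrically with depth when the weight is of order one, which is the effect the slide refers to.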

Equivalent Problem; Relaxed Problem
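The formulas for these two problems are not part of the transcript. One common way to set up such a pair, offered here as an assumption rather than the authors' exact formulation, introduces an auxiliary variable for every layer output. The symbols $\sigma$ (activation), $z_{i,k}$ (output of layer $k$ for sample $i$), $K$ (number of layers), and $\mu$ (penalty weight) are introduced for this sketch; $W$, $b$, $x$, $y$, and $l$ follow the notation above.

```latex
% Equivalent problem (assumed form): the network is written as equality constraints
\min_{W,\,b,\,z}\ \sum_{i} l\bigl(z_{i,K},\, y_i\bigr)
\quad \text{s.t.} \quad
z_{i,k} = \sigma\bigl(W_k z_{i,k-1} + b_k\bigr),\ \ k = 1,\dots,K,
\qquad z_{i,0} = x_i

% Relaxed problem (assumed form): constraints moved into a quadratic penalty
\min_{W,\,b,\,z}\ \sum_{i} l\bigl(z_{i,K},\, y_i\bigr)
+ \frac{\mu}{2} \sum_{i} \sum_{k=1}^{K}
\bigl\| z_{i,k} - \sigma\bigl(W_k z_{i,k-1} + b_k\bigr) \bigr\|^2
```

In a relaxation of this kind, fixing $z$ decouples the layers from each other, and fixing $W, b$ decouples the samples from each other, which is what makes an alternating scheme attractive for parallel hardware.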

Alternating Minimization: W-update; z-update; one or more steps of damped Newton; cheap-to-compute Hessian; parallel computing.
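The alternating scheme can be sketched on a toy problem. Everything specific below is an assumption made for illustration and is not taken from the slides or from neuralNetwork.jl: a scalar two-weight model `y ≈ w2 * tanh(w1 * x)`, auxiliary variables `z_i` standing in for `w1 * x_i`, a squared loss, a penalty weight `mu`, and the function names. With `z` fixed, both weights have closed-form least-squares updates. With the weights fixed, each `z_i` is an independent scalar problem, so its "Hessian" is a single number and the per-sample updates could be run in parallel.

```python
import math

def objective(w1, w2, zs, xs, ys, mu):
    """Relaxed objective: squared data fit plus penalty on the relaxed constraint z = w1*x."""
    fit = sum((y - w2 * math.tanh(z)) ** 2 for z, y in zip(zs, ys))
    pen = sum((z - w1 * x) ** 2 for z, x in zip(zs, xs))
    return fit + mu * pen

def w_update(zs, xs, ys, w1, w2):
    """Exact least-squares solves; w1 and w2 appear in separate terms of the objective."""
    sxx = sum(x * x for x in xs)
    if sxx > 0.0:
        w1 = sum(z * x for z, x in zip(zs, xs)) / sxx
    hs = [math.tanh(z) for z in zs]
    shh = sum(h * h for h in hs)
    if shh > 0.0:
        w2 = sum(y * h for y, h in zip(ys, hs)) / shh
    return w1, w2

def z_update(z, x, y, w1, w2, mu, steps=2):
    """A few damped Newton steps on one sample's scalar subproblem (assumes mu > 0)."""
    c = w1 * x

    def f(v):
        return (y - w2 * math.tanh(v)) ** 2 + mu * (v - c) ** 2

    for _ in range(steps):
        s = math.tanh(z)
        ds = 1.0 - s * s            # tanh'
        d2s = -2.0 * s * ds         # tanh''
        r = y - w2 * s              # residual of the data-fit term
        g = -2.0 * w2 * ds * r + 2.0 * mu * (z - c)
        h = 2.0 * (w2 * ds) ** 2 - 2.0 * w2 * d2s * r + 2.0 * mu
        if h <= 0.0:
            h = 2.0 * mu            # fall back to the penalty curvature so d is a descent direction
        d = -g / h
        t, f0 = 1.0, f(z)
        for _ in range(30):         # backtracking (Armijo) supplies the damping
            if f(z + t * d) <= f0 + 1e-4 * t * g * d:
                z = z + t * d
                break
            t *= 0.5
        else:
            break                   # no acceptable step found: keep z unchanged
    return z

def alternating_minimization(xs, ys, mu=1.0, iters=50):
    w1, w2 = 0.1, 0.1
    zs = [w1 * x for x in xs]
    history = [objective(w1, w2, zs, xs, ys, mu)]
    for _ in range(iters):
        w1, w2 = w_update(zs, xs, ys, w1, w2)
        # Each z_i depends only on its own sample, so this loop is embarrassingly parallel.
        zs = [z_update(z, x, y, w1, w2, mu) for z, x, y in zip(zs, xs, ys)]
        history.append(objective(w1, w2, zs, xs, ys, mu))
    return w1, w2, zs, history

if __name__ == "__main__":
    xs = [-2.0, -1.0, -0.5, 0.5, 1.0, 2.0]
    ys = [1.5 * math.tanh(0.8 * x) for x in xs]
    w1, w2, zs, history = alternating_minimization(xs, ys)
    print(w1, w2, history[0], history[-1])
```

Since the weight updates are exact minimizers and a Newton step is only accepted when it lowers the per-sample objective, the relaxed objective should not increase from one outer iteration to the next, up to rounding.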

RNN: Equivalent Problem; Relaxed Problem

With L_2 Regularization: W-update; z-update; one or more steps of damped Newton; cheap-to-compute Hessian; parallel computing.
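As a small illustration of how an L_2 term can change a weight update, the sketch below assumes the W-subproblem is a scalar least-squares fit `sum_i (z_i - w * x_i)^2` with an added penalty `lam * w^2`. That form, the name `ridge_w_update`, and the parameter `lam` are assumptions for this example, not the slides' formulation. Setting the derivative to zero gives `w = sum(z*x) / (sum(x*x) + lam)`.

```python
def ridge_w_update(zs, xs, lam):
    """Closed-form minimizer of sum_i (z_i - w*x_i)^2 + lam*w^2 over a scalar w.

    Hypothetical helper; assumes sum(x*x) + lam > 0.
    """
    sxx = sum(x * x for x in xs)
    szx = sum(z * x for z, x in zip(zs, xs))
    return szx / (sxx + lam)

if __name__ == "__main__":
    xs = [1.0, 2.0]
    zs = [2.0, 4.0]
    print(ridge_w_update(zs, xs, 0.0))  # unregularized least-squares fit
    print(ridge_w_update(zs, xs, 5.0))  # the penalty shrinks the weight toward zero
```

The penalty adds `lam` to the denominator, so the update stays well defined even when the inputs carry little signal, and the weight is pulled toward zero as `lam` grows.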

Code: neuralNetwork.jl, https://github.com/Yuanchu/neuralNetwork