Voice Application Retrieval System for Improved Spoken Language Understanding

This system improves spoken language understanding by aligning paraphrase labels for voice application retrieval. It proposes a skill retrieval service for unclaimed utterances, aiming to reduce failures caused by model limitations or routing errors. Using a two-step approach of paraphrase detection followed by skill retrieval, the system improves relevancy prediction for suggested fallback skills. It selects paraphrase candidates with methods such as Jaccard similarity and Siamese networks, and aligns labels during retrieval through cross-entropy and label alignment losses.

Presentation Transcript

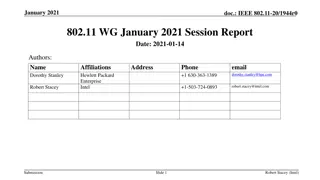

Paraphrase Label Alignment for Voice Application Retrieval in Spoken Language Understanding
Zheng Gao, Applied Scientist, Amazon Alexa AI

Background
Alexa systems: respond to user requests by routing them to related applications. Utterance: the voice request issued by a user. 1P/3P skills: a 1P skill (also called a domain) is any Alexa-developed skill, such as Calendar or News; a 3P skill is any non-Alexa-developed skill, such as Uber or Lyft. More examples: https://www.amazon.com/alexa-skills/b?ie=UTF8&node=13727921011
Example: Utterance: "alexa, play today's hits". Response: the Pandora application is invoked.

Research Question
Goal: to avoid unnecessary utterance failures in SLU systems, such as those caused by model limitations or routing errors, we propose a skill retrieval system as a downstream service that suggests fallback skills for unclaimed utterances. Input: unclaimed utterances. Output: suggested fallback skills. Keywords: data augmentation, pseudo label alignment.

Method: Two-Step Approach
Paraphrase detection: detect paraphrases of an utterance among claimed utterances. Skill retrieval: retrieve skills using a customized loss function that aligns the skills launched by the paraphrases. In the testing stage, we apply only the skill retrieval step to unclaimed utterances: we predict relevancy labels for their shortlisted skills and retrieve the skill with the highest prediction score. Claimed utterances and the paraphrase detection step are not involved.
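
The test-time flow described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: `suggest_fallback_skill`, `shortlist`, and `relevancy_score` are hypothetical names, and the two callables stand in for the Elasticsearch TF-IDF shortlister and the trained reranker.

```python
from typing import Callable, List, Optional


def suggest_fallback_skill(
    utterance: str,
    shortlist: Callable[[str], List[str]],
    relevancy_score: Callable[[str, str], float],
) -> Optional[str]:
    """Test-time skill retrieval for an unclaimed utterance (hypothetical helper).

    Only the skill retrieval step runs here: no claimed utterances and no
    paraphrase detection are used at test time.
    """
    candidates = shortlist(utterance)  # e.g. TF-IDF shortlisting in the original system
    if not candidates:
        return None
    # Score every shortlisted skill and return the one with the highest prediction.
    return max(candidates, key=lambda skill: relevancy_score(utterance, skill))


# Toy stand-ins with made-up scores, for illustration only.
toy_scores = {"Pandora": 0.9, "News": 0.2, "Uber": 0.1}
print(suggest_fallback_skill("play today's hits", lambda u: list(toy_scores), lambda u, s: toy_scores[s]))
# prints: Pandora
```

Passing the shortlister and scorer as callables keeps the sketch independent of any particular search backend or model.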

Paraphrase Detection
Raw selection: word-level similarity via Jaccard similarity; semantic similarity via pretrained BERT embeddings with FAISS similarity search. Pairwise matching: a Siamese network built on claimed utterances detects the pairwise relationship between utterances. Given an utterance, all claimed utterances whose matching score (raw selection score + pairwise matching score) is above a threshold are selected as its paraphrase candidate set.
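
The selection rule on this slide can be sketched in Python. This is a minimal sketch under stated assumptions: only the word-level Jaccard part of the raw selection score is implemented, lowercase whitespace tokenization is assumed, and the hypothetical `pairwise_score` callable stands in for the Siamese network (the BERT + FAISS semantic similarity is omitted). The function names and the threshold value are illustrative.

```python
from typing import Callable, List


def jaccard_similarity(a: str, b: str) -> float:
    """Word-level Jaccard similarity: shared tokens divided by all distinct tokens."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    union = tokens_a | tokens_b
    return len(tokens_a & tokens_b) / len(union) if union else 0.0


def paraphrase_candidates(
    utterance: str,
    claimed: List[str],
    pairwise_score: Callable[[str, str], float],
    threshold: float,
) -> List[str]:
    """Select claimed utterances whose raw + pairwise matching score exceeds the threshold.

    `pairwise_score` is a hypothetical stand-in for the Siamese network.
    """
    return [
        other
        for other in claimed
        if jaccard_similarity(utterance, other) + pairwise_score(utterance, other) > threshold
    ]


print(jaccard_similarity("play todays hits", "play todays top hits"))  # prints: 0.75
```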

Skill Retrieval
Shortlisting: a TF-IDF model in Elasticsearch. Reranking: an utterance and skill encoder with sequence labeling, trained with cross-entropy loss plus label alignment loss. Loss function: the cross-entropy loss compares the predicted label sequence scores with the ground truth; the label alignment loss minimizes the KL divergence between the prediction scores of the utterance and its paraphrases on the skills that have positive labels in the paraphrases but not in the original utterance.
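
One possible reading of the two loss terms is sketched below in plain Python. The slide does not give the exact formulation, so several assumptions apply: relevancy scores are per-skill probabilities over the same shortlisted skills for the utterance and its paraphrase, the cross-entropy term is a mean binary cross-entropy, the alignment term is a Bernoulli KL divergence from the paraphrase's scores to the original utterance's scores on the masked skills, and `weight` is a hypothetical balancing factor. A real training setup would compute this on tensors with automatic differentiation.

```python
import math
from typing import Sequence

EPS = 1e-7  # keeps probabilities away from 0 and 1 so log() stays finite


def _clip(p: float) -> float:
    return min(max(p, EPS), 1.0 - EPS)


def binary_cross_entropy(probs: Sequence[float], labels: Sequence[int]) -> float:
    """Mean binary cross-entropy between predicted relevancy scores and 0/1 labels."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = _clip(p)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(probs)


def bernoulli_kl(p: float, q: float) -> float:
    """KL(Bernoulli(p) || Bernoulli(q))."""
    p, q = _clip(p), _clip(q)
    return p * math.log(p / q) + (1.0 - p) * math.log((1.0 - p) / (1.0 - q))


def label_alignment_loss(
    orig_probs: Sequence[float],
    para_probs: Sequence[float],
    orig_labels: Sequence[int],
    para_labels: Sequence[int],
) -> float:
    """Mean KL on skills labeled positive for the paraphrase but not for the original utterance."""
    masked = [
        i
        for i, (y_orig, y_para) in enumerate(zip(orig_labels, para_labels))
        if y_para == 1 and y_orig == 0
    ]
    if not masked:
        return 0.0
    return sum(bernoulli_kl(para_probs[i], orig_probs[i]) for i in masked) / len(masked)


def total_loss(
    orig_probs: Sequence[float],
    para_probs: Sequence[float],
    orig_labels: Sequence[int],
    para_labels: Sequence[int],
    weight: float = 1.0,  # hypothetical weight on the alignment term
) -> float:
    """Cross-entropy on the original utterance plus the weighted alignment term."""
    return binary_cross_entropy(orig_probs, orig_labels) + weight * label_alignment_loss(
        orig_probs, para_probs, orig_labels, para_labels
    )
```

Restricting the KL term to skills that are positive only for the paraphrase is what lets paraphrase labels act as extra supervision without overriding the original utterance's own labels.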

Datasets
Datasets en-US and en-CA contain unclaimed English utterances from devices in the United States and Canada, respectively. The third dataset contains claimed English utterances from devices in both countries. In total, we collect 1 million unclaimed utterances in en-US, 80 thousand unclaimed utterances in en-CA, and 16 million claimed utterances across both countries.

Paraphrase Detection Evaluation
Encoder structure exploration: TinyBERT, BERT, AlexaBERT, CharCNN, GloVe, and ELMo encoders within the Siamese network structure. Comparison results: reported as the relative change of each baseline's score with respect to the best model's score.
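
The relative-change comparison can be written as (baseline score - best score) / best score. The sketch below assumes that definition and that higher scores are better; the model names and scores in the usage line are placeholders, not results from the presentation.

```python
from typing import Dict


def relative_change(baseline: float, best: float) -> float:
    """Relative change of a baseline score with respect to the best score."""
    if best == 0:
        raise ValueError("best score must be non-zero")
    return (baseline - best) / best


def relative_changes(scores: Dict[str, float]) -> Dict[str, float]:
    """Compare every model's score against the highest score in the table."""
    best = max(scores.values())
    return {name: relative_change(score, best) for name, score in scores.items()}


# Placeholder scores for two hypothetical models (not real results).
print(relative_changes({"model_a": 0.5, "model_b": 0.25}))
# prints: {'model_a': 0.0, 'model_b': -0.5}
```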

Skill Retrieval Evaluation
Model variants: TF-IDF; Listwise; Listwise + PL (paraphrase labels); Listwise + Co (combined claimed and unclaimed training data); Faiss + JS + SR (truncated model with the Faiss model, Jaccard similarity, and the skill retrieval step); Siamese + SR (truncated model with the Siamese network and the skill retrieval step). Evaluation metrics: precision, recall, F1, and NDCG.
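
The evaluation metrics listed above can be computed as follows. This is a generic sketch, not the authors' evaluation code: precision, recall, and F1 are computed from true positive, false positive, and false negative counts, and NDCG@k uses linear gains with a log2 rank discount, which is one common variant (the slide does not state which variant was used).

```python
import math
from typing import Sequence, Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision, recall, and F1 from true positive, false positive, and false negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def dcg_at_k(relevances: Sequence[float], k: int) -> float:
    """Discounted cumulative gain with linear gains; rank 1 has discount log2(2) = 1."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances: Sequence[float], k: int) -> float:
    """DCG of the predicted ranking divided by the DCG of the ideal (sorted) ranking."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


# A relevant skill ranked second among three shortlisted skills.
print(round(ndcg_at_k([0, 1, 0], k=3), 3))  # prints: 0.631
```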

Thanks!